AWS Amplify is the fastest and easiest way to build cloud-powered mobile and web apps on AWS. Amplify comprises a set of tools and services that enables front-end web and mobile developers to leverage the power of AWS services to build innovative and feature-rich applications.

Chrome extensions are a great way to build mini applications that utilize the browser functionality to serve content to users. These extensions provide the means for additional functionality by allowing ways to configure and embed information into a webpage. When combining the power of AWS cloud-based services and Chrome browser functionality, we can customize applications to increase productivity and decrease development time.

Chrome extension

The following example adds an extension to a specific URL/webpage and lets its users attach images to that page. For example, a user can take a screenshot of text or activity on the page and store it, building a catalog of images that they can refer back to when they revisit the page. The extension uses Amplify-provided resources: an Amazon Simple Storage Service (Amazon S3) bucket stores the images, and an Amplify UI component displays them.

A GIF that shows the final demonstration of the Chrome extension that displays the image of a cat

The application uses the latest Chrome Manifest V3 to create the extension. It is developed with the React JavaScript library and uses Amplify resources to provide functionality.

The Chrome extension manifest is an entry point that contains three main components.

- The Popup scripts

- Content scripts

- Background scripts

Popup scripts drive the UI that appears when the user selects the extension. Content scripts run in the context of a web page and let us read, modify, or add functionality to that page. Background scripts (service workers in Manifest V3) are event-based programs that let us monitor events and exchange messages with other scripts.
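As a rough illustration of where each of these components is declared, the sketch below builds a Manifest V3 object in JavaScript. The file names (`popup.html`, `content.js`, `service-worker.js`), the extension name, and the `buildManifest` helper are placeholders invented for this example, not files from this project. The keys `action.default_popup`, `content_scripts`, and `background.service_worker` are the Manifest V3 fields for the three component types.

```javascript
// Sketch only: the file names and extension name are hypothetical placeholders.
function buildManifest({ popup, contentScript, serviceWorker, matches }) {
  return {
    manifest_version: 3,
    name: 'Example extension',
    version: '0.0.1',
    // Popup: the page shown when the user selects the extension icon.
    action: { default_popup: popup },
    // Content scripts: injected into pages whose URL matches a pattern.
    content_scripts: [{ matches, js: [contentScript] }],
    // Background script: an event-driven service worker in Manifest V3.
    background: { service_worker: serviceWorker },
  };
}

const exampleManifest = buildManifest({
  popup: 'popup.html',
  contentScript: 'content.js',
  serviceWorker: 'service-worker.js',
  matches: ['https://docs.amplify.aws/*'],
});

console.log(JSON.stringify(exampleManifest, null, 2));
```

This walkthrough only needs the `content_scripts` entry; the other two keys are shown for orientation.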

In this application, we’ll use a content script to modify a web page by adding an upload button and displaying an image.

For detailed information, refer to the Chrome documentation.

AWS Amplify

AWS Amplify is a set of purpose-built tools and features that lets frontend web and mobile developers quickly and easily build full-stack applications on AWS. This also includes the flexibility to leverage the breadth of AWS services as your use cases evolve.

The Amplify Command Line Interface (CLI) is a unified toolchain to create, integrate, and manage the AWS cloud services for your app.

We’ll utilize Amplify CLI to add Amazon S3 storage and Authentication capabilities. Additionally, we’ll use Amplify UI components to add a picker component.

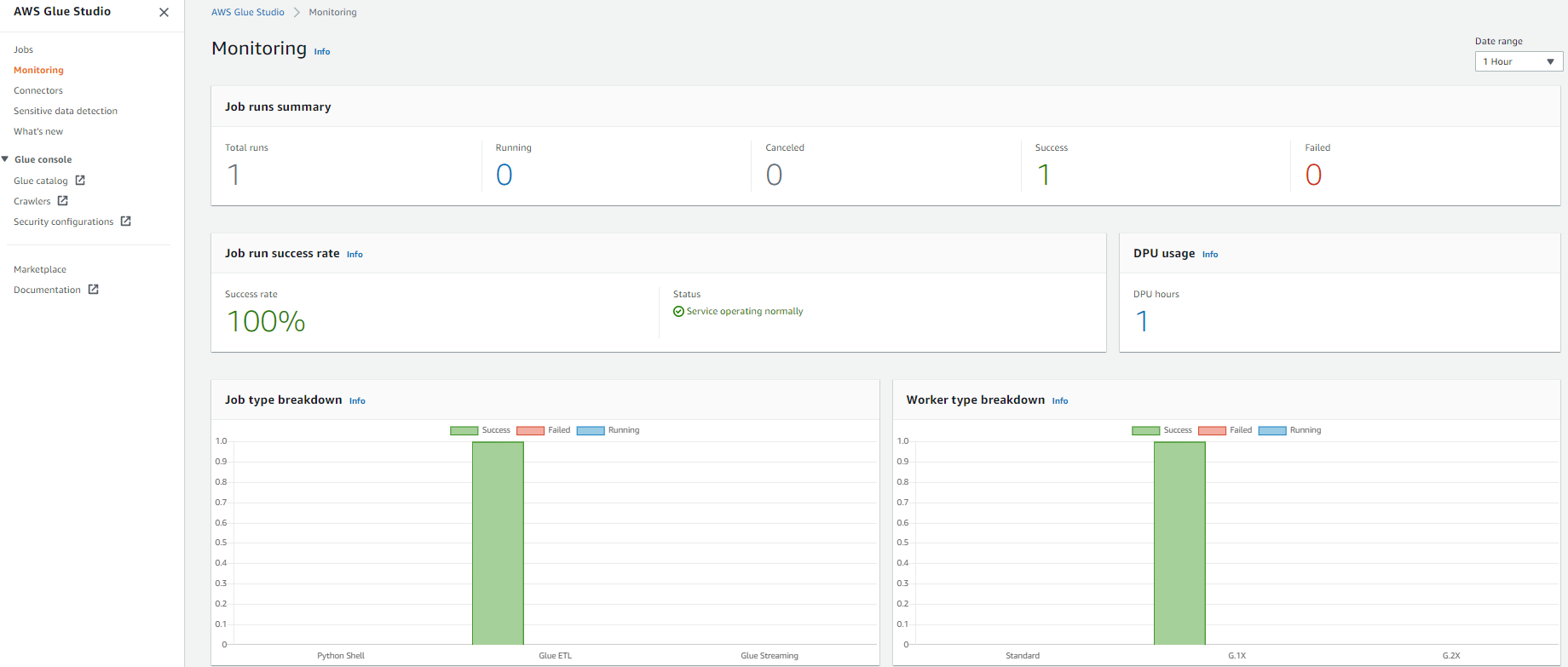

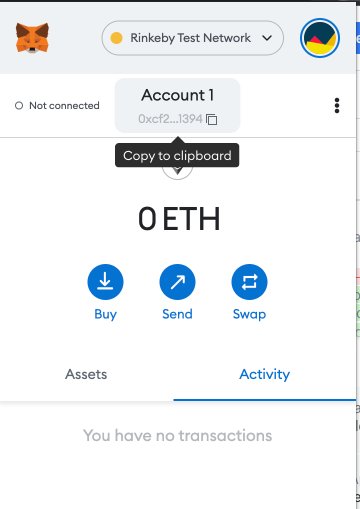

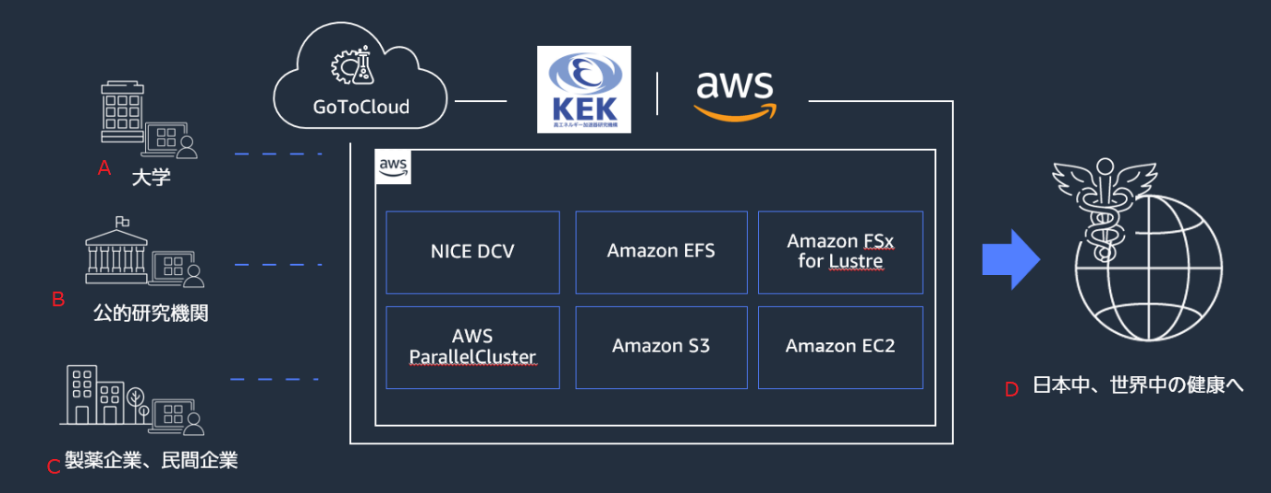

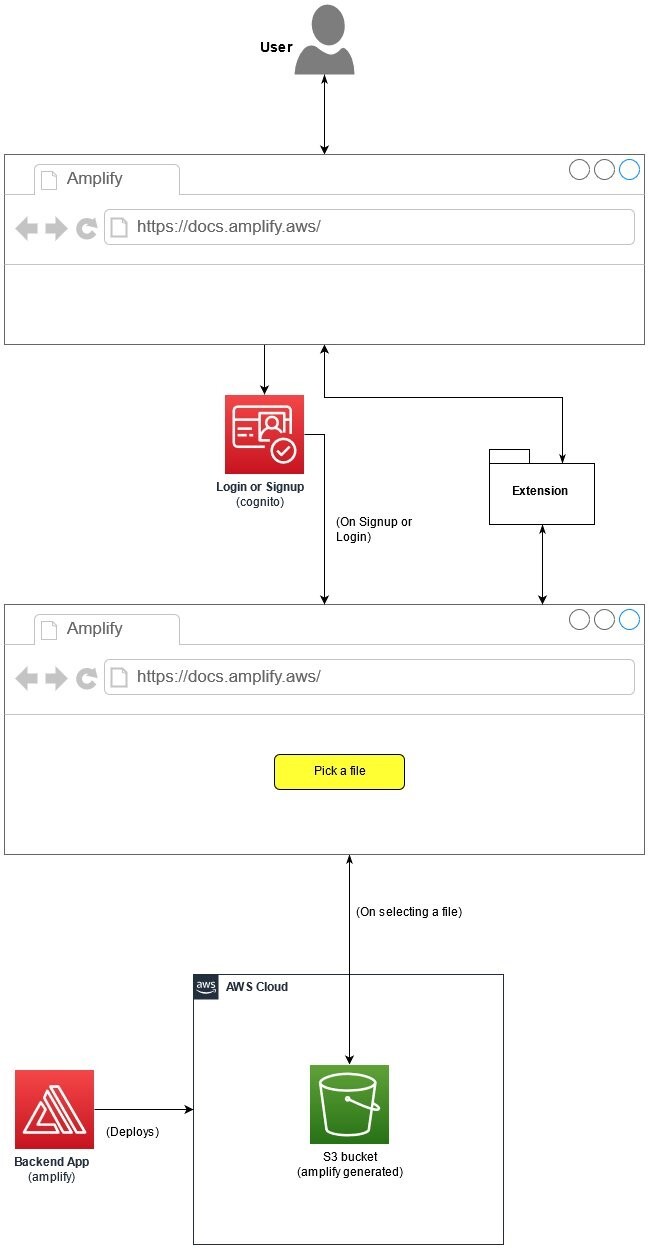

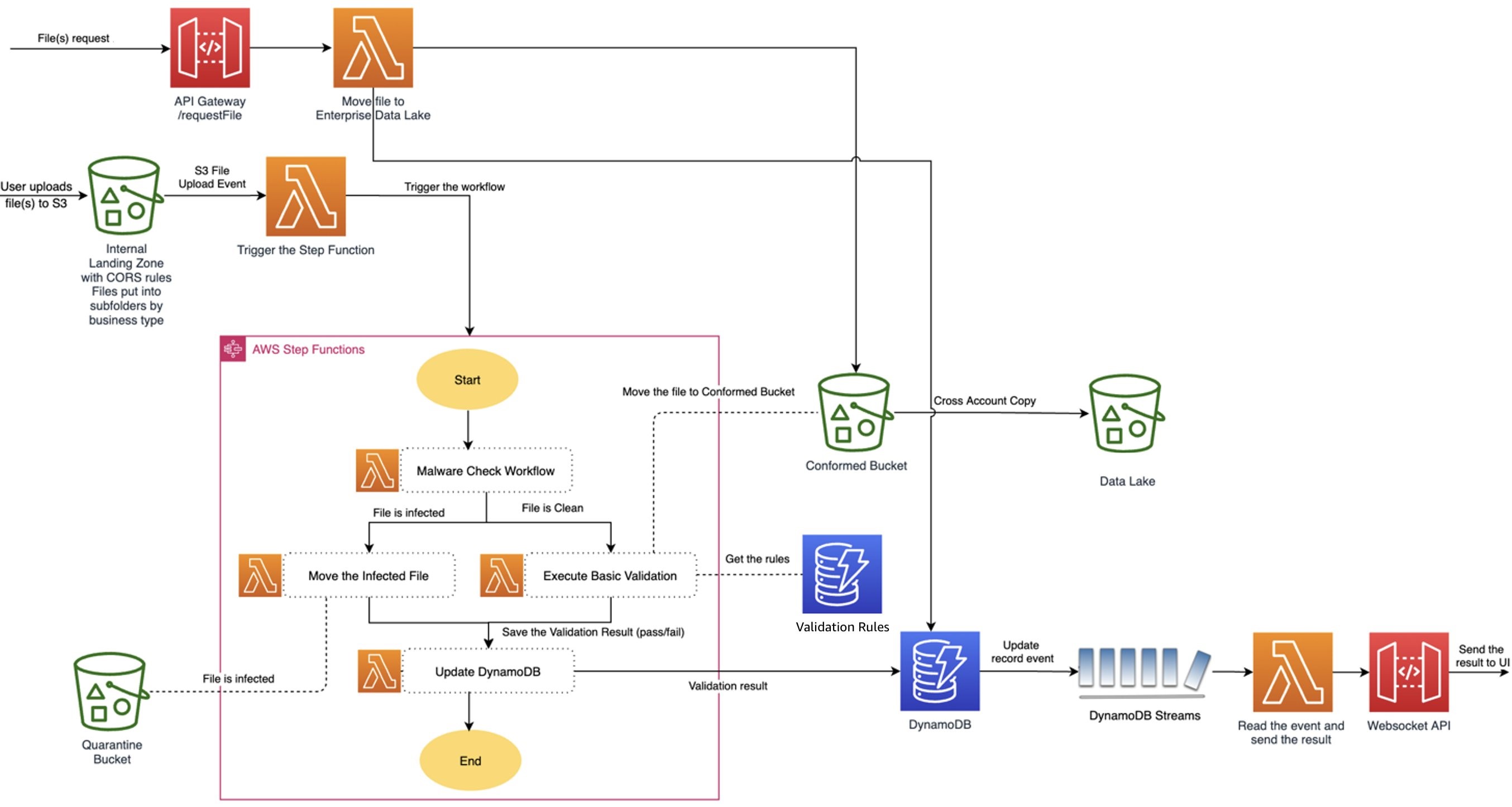

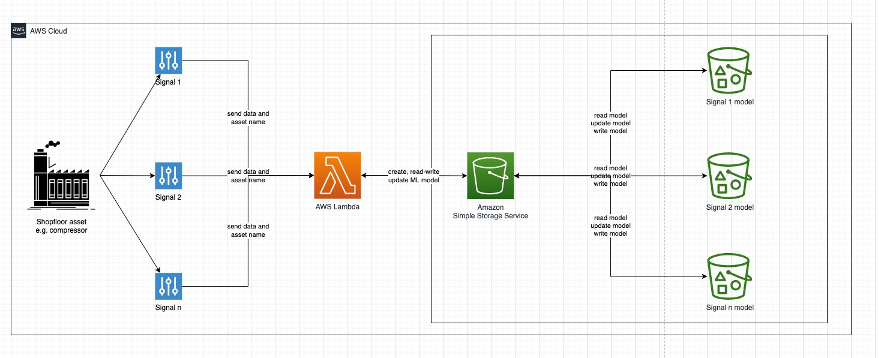

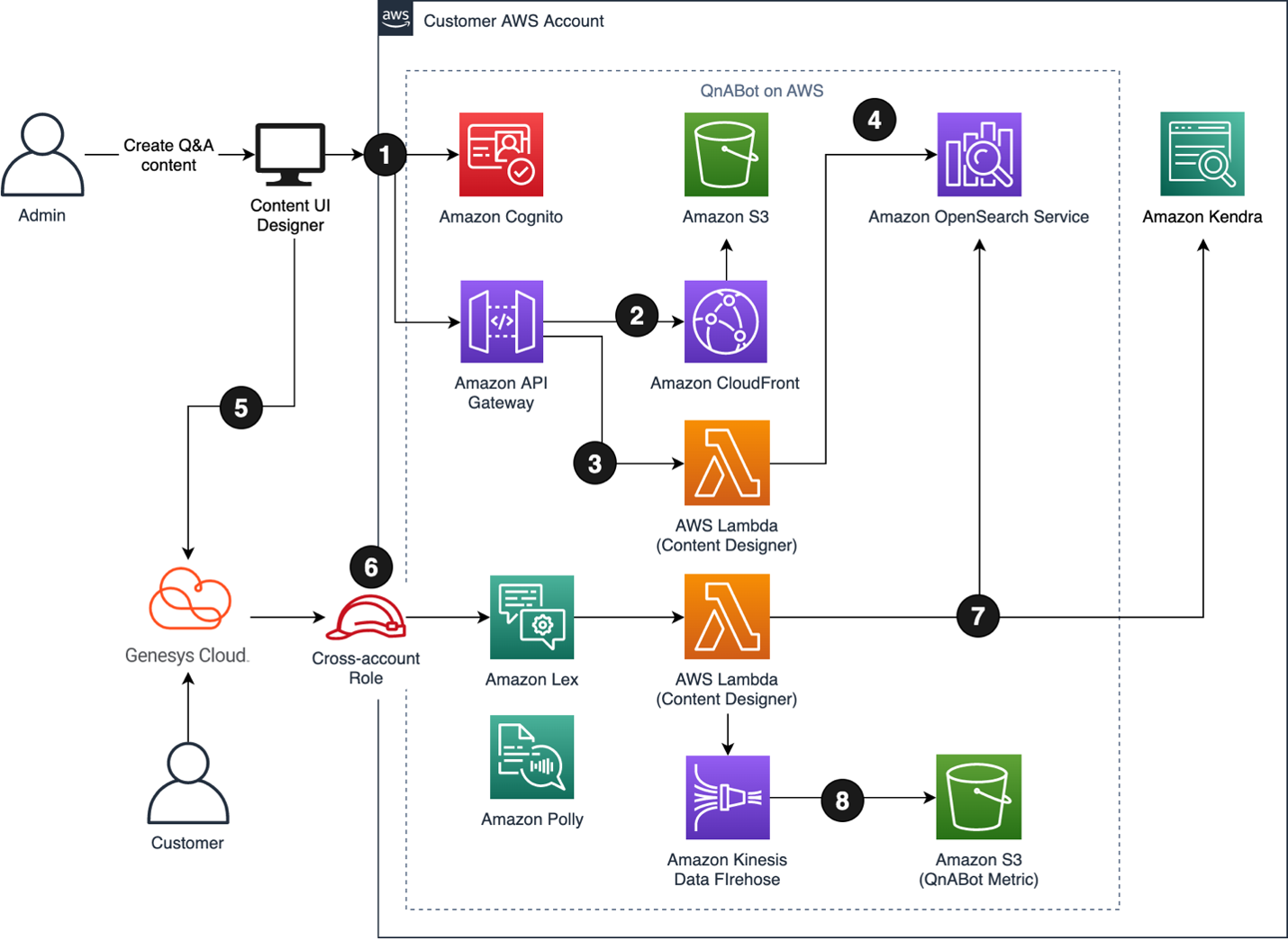

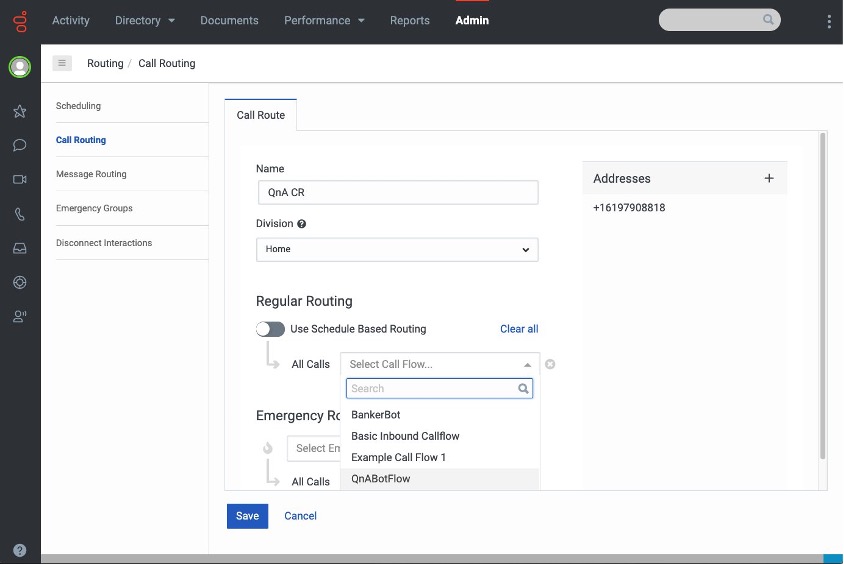

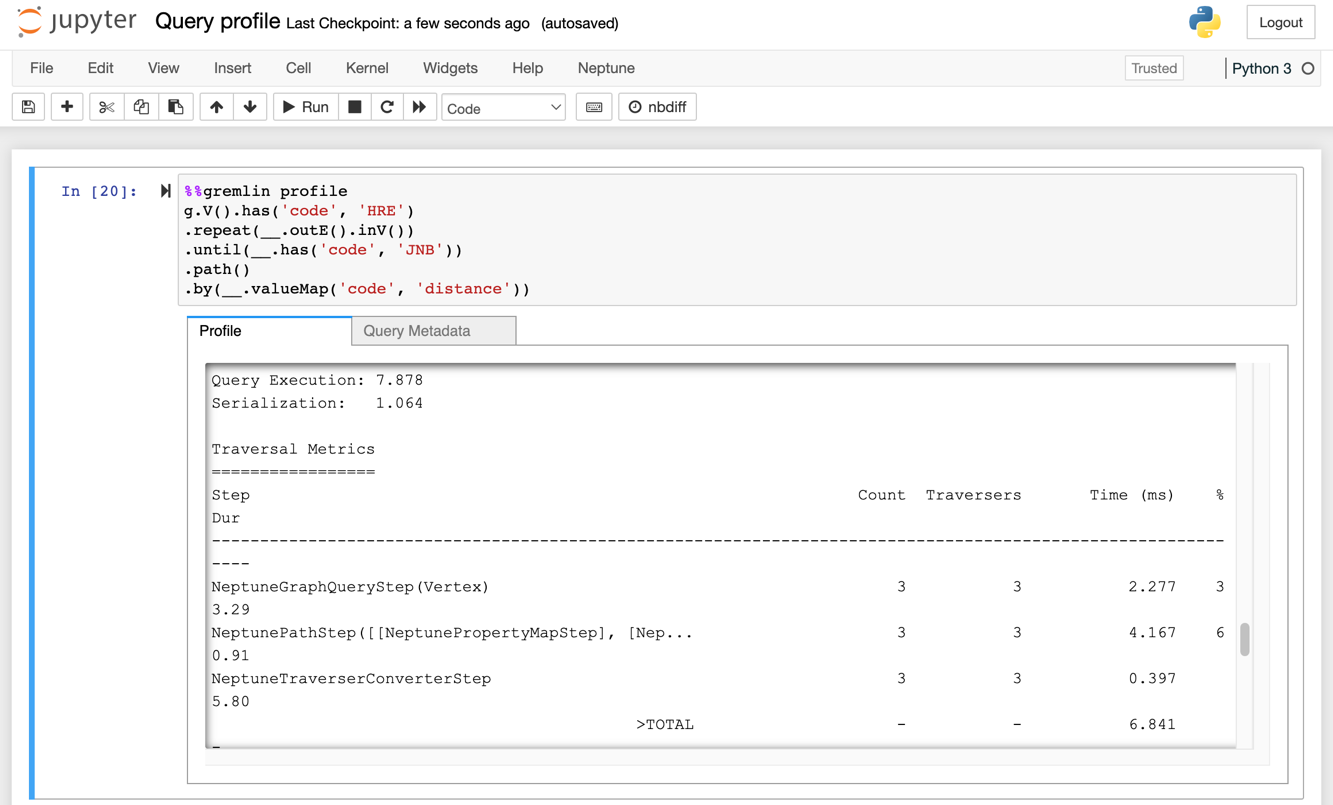

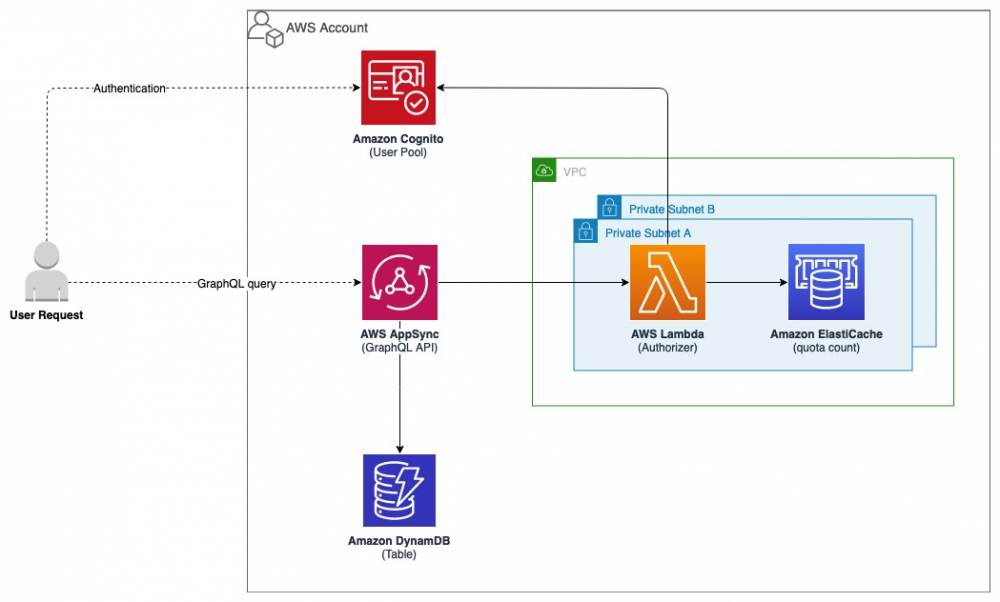

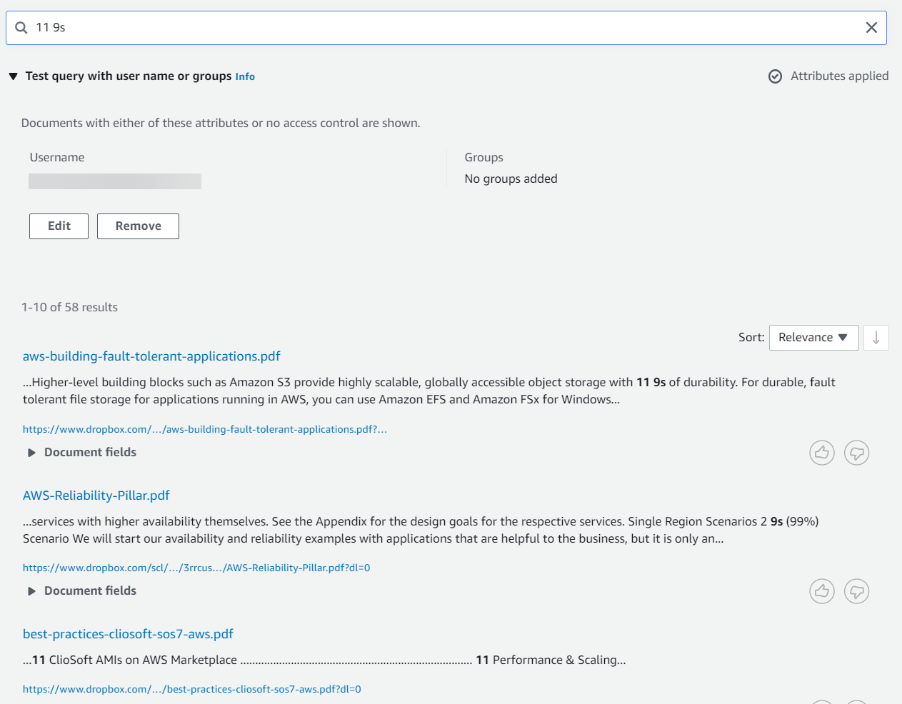

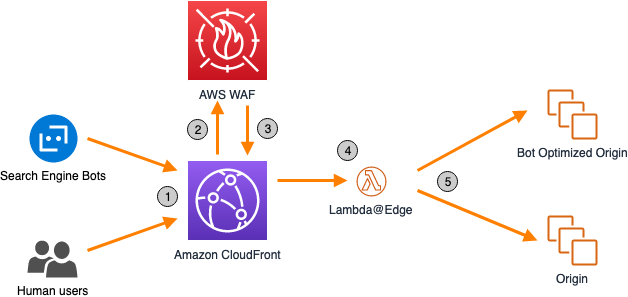

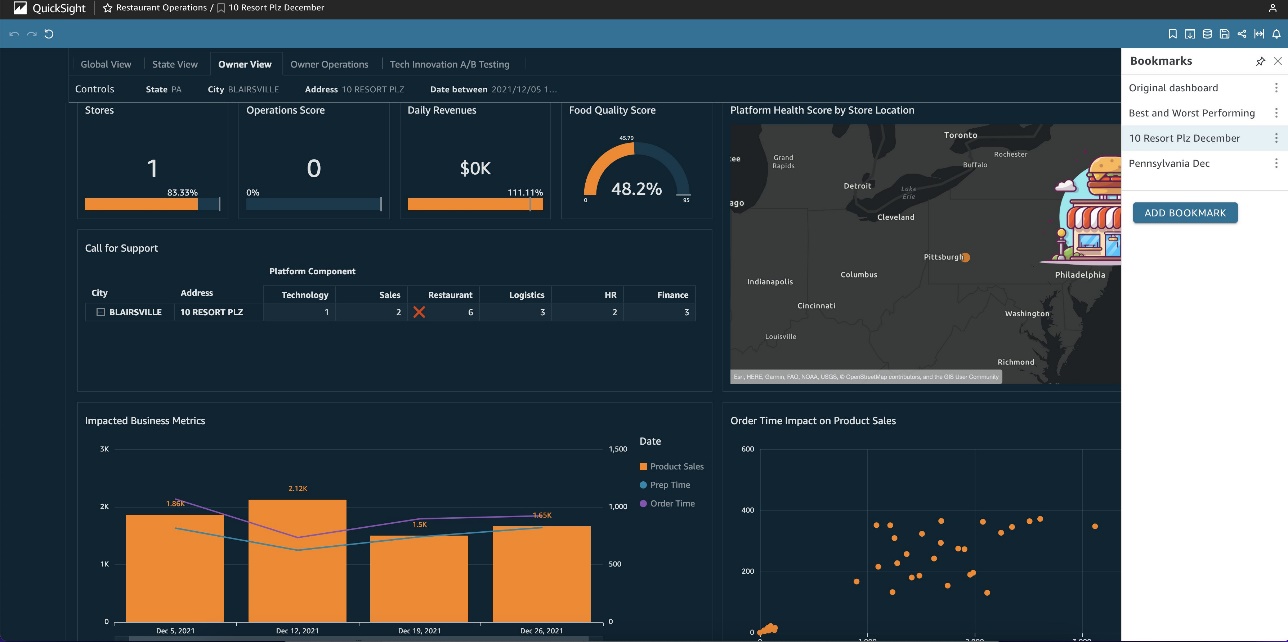

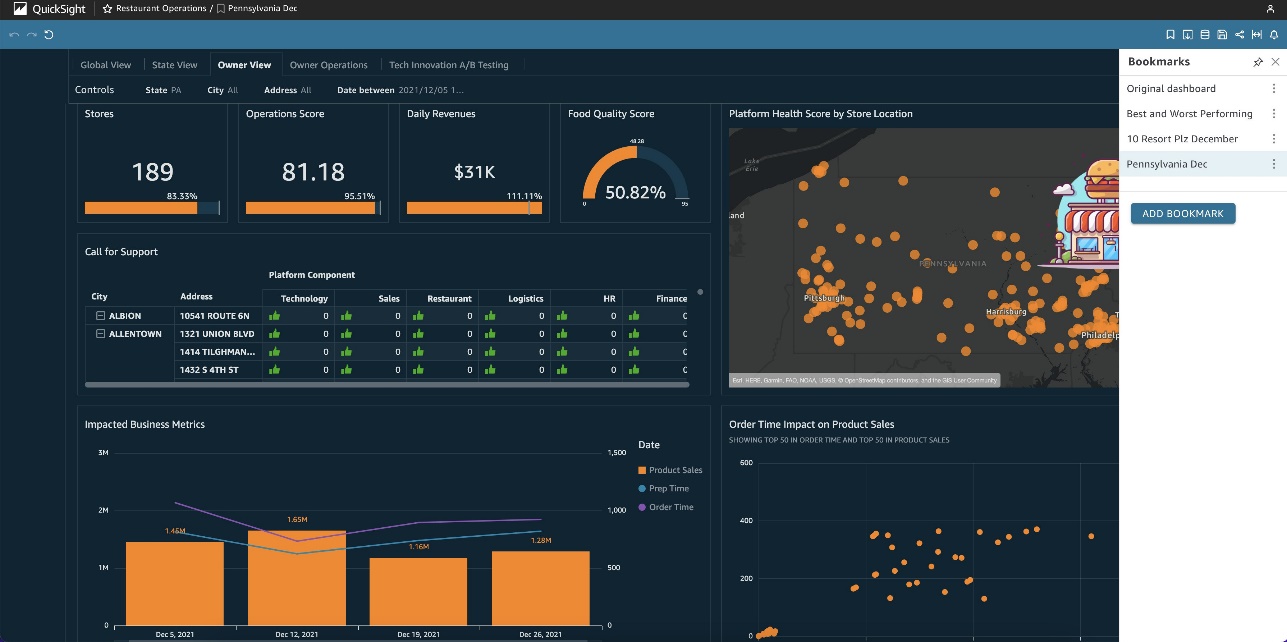

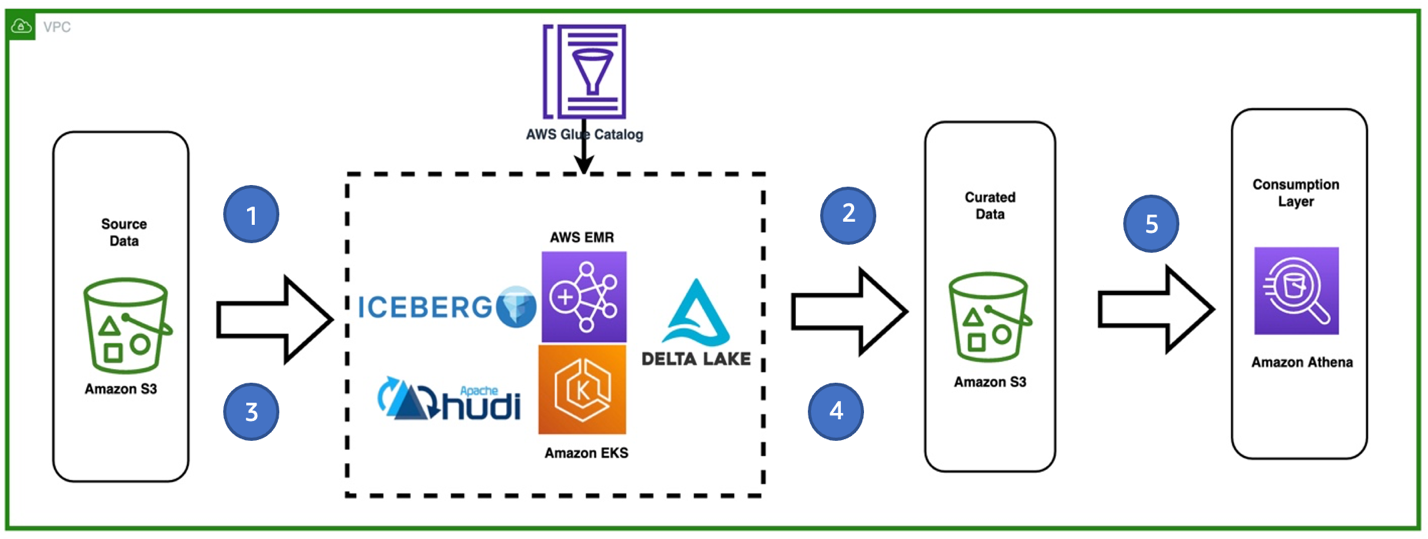

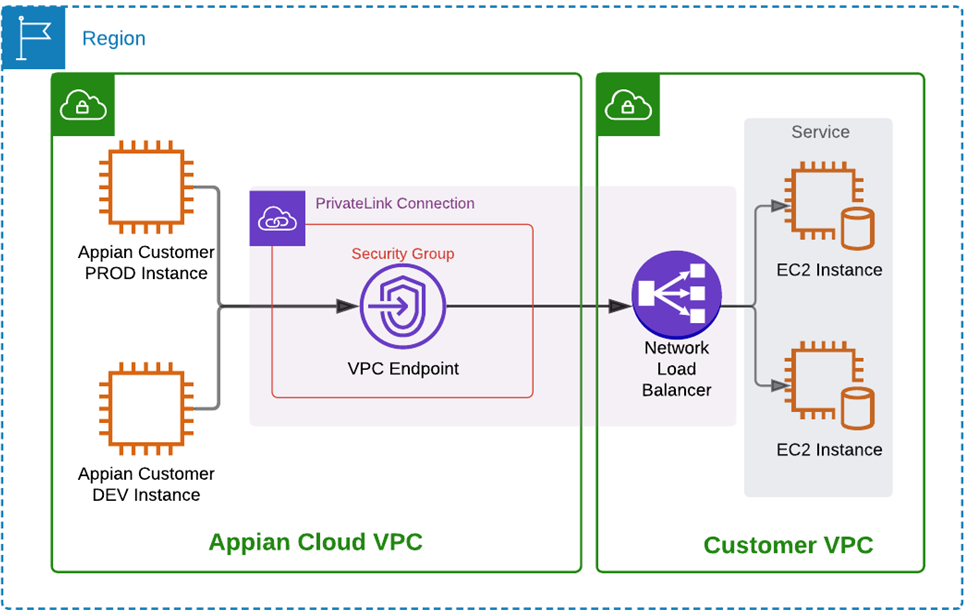

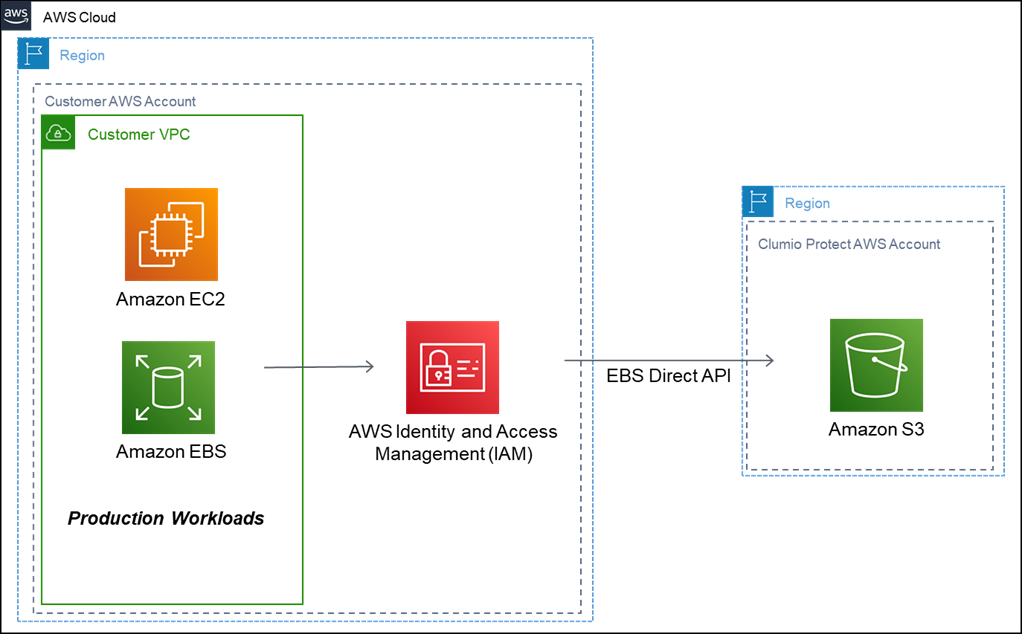

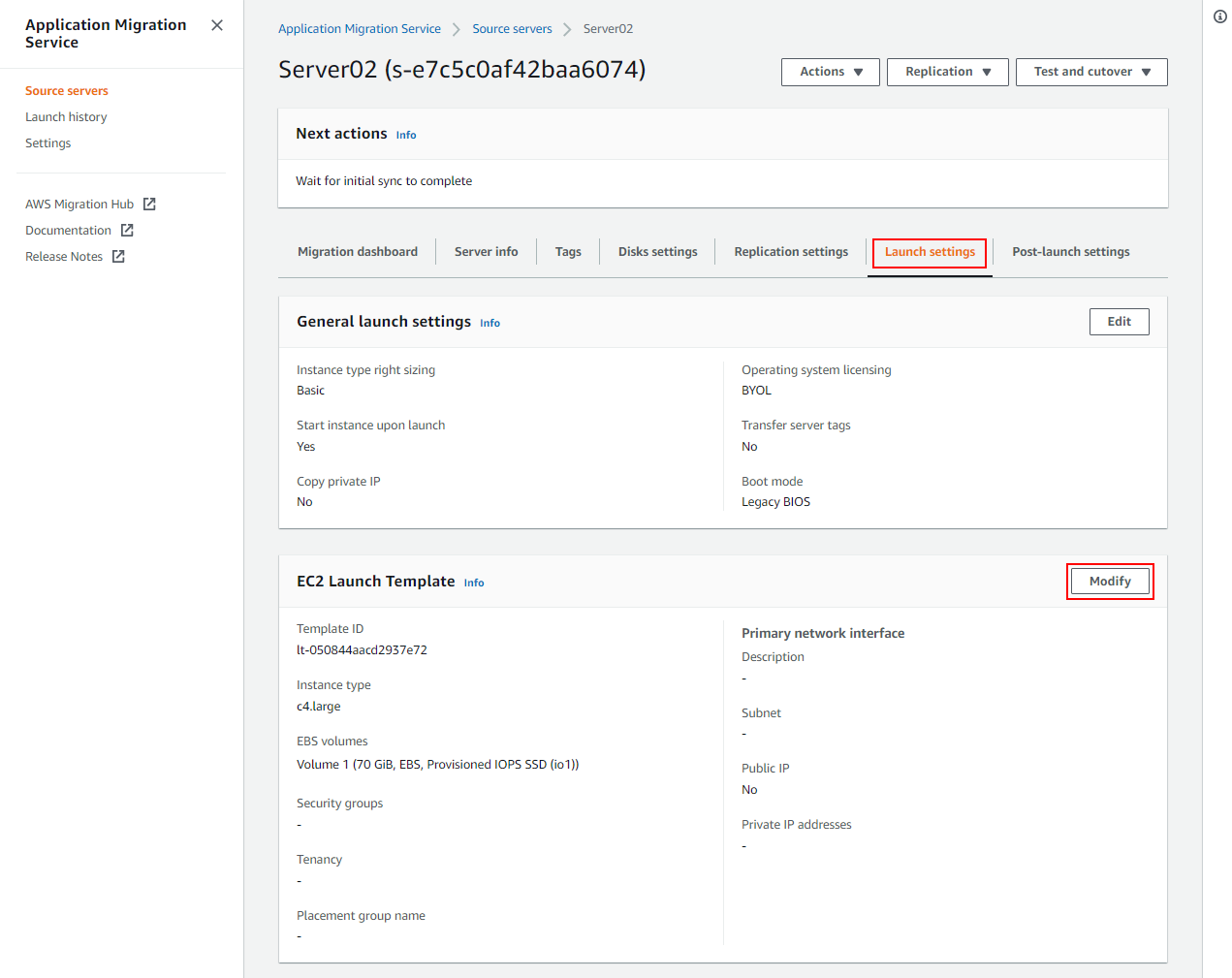

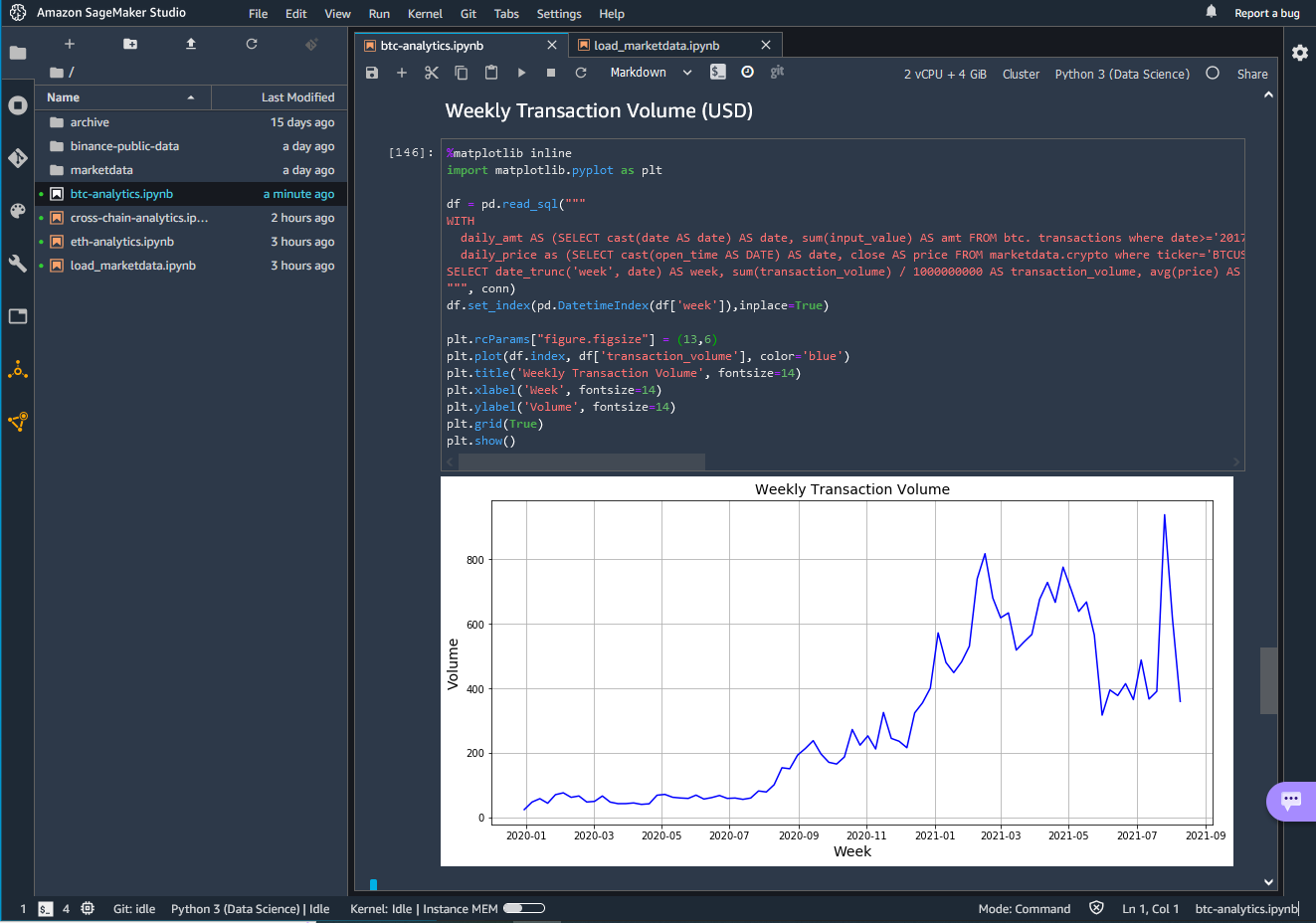

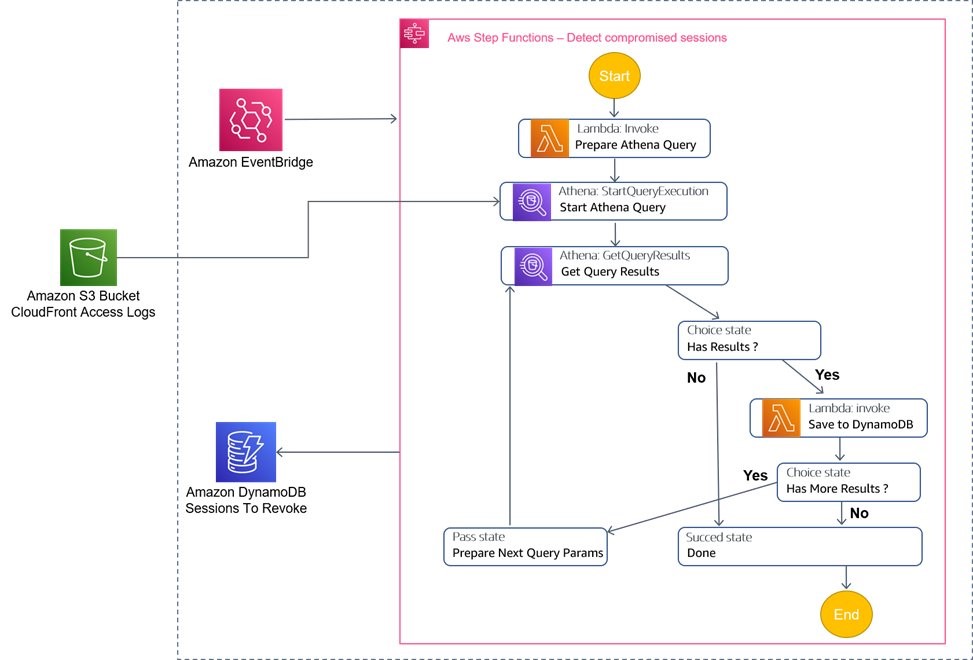

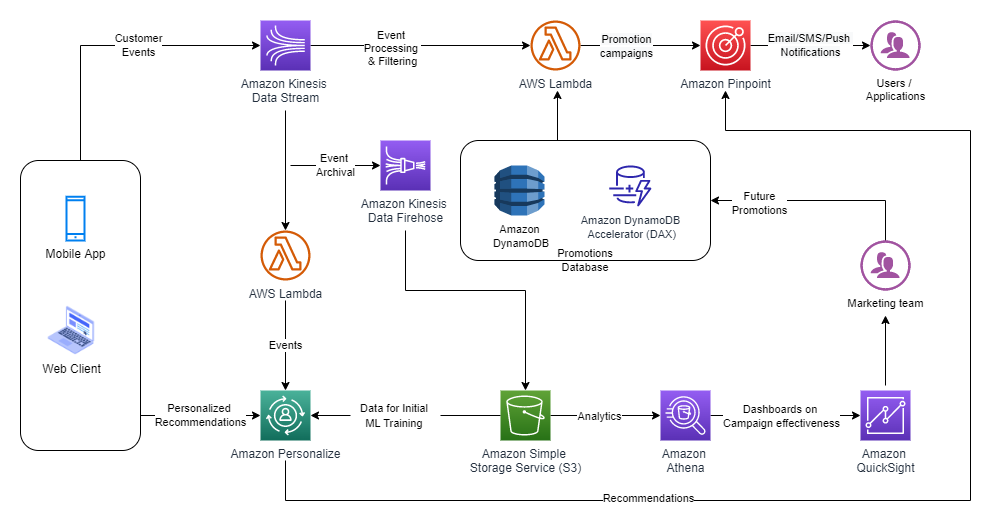

Architecture

Figure 1. The Architecture diagram for the Amplify application showing the interaction with the S3 bucket and Chrome scripts

Walkthrough

In this application, we create a post-build script that updates the manifest file on each build. Because the build generates JS and CSS files with hashed names, the script copies the current file names into the manifest so that the content script can load them and append HTML elements to the page.
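A minimal sketch of that collection step is shown below. It uses made-up file names with content hashes of the kind a production React build emits, and plain string handling instead of a glob library. The `collectAssets` helper and the sample paths are assumptions for illustration only.

```javascript
// Hypothetical build output; the hash parts change from build to build.
const builtFiles = [
  'static/js/main.3f2a1b9c.js',
  'static/js/main.3f2a1b9c.js.map',
  'static/css/main.07c1d2e4.css',
  'static/media/logo.6ce24c58.svg',
];

// Group files by extension. Source maps end in ".map", so they match
// neither filter and are left out.
function collectAssets(files) {
  return {
    js: files.filter((file) => file.endsWith('.js')),
    css: files.filter((file) => file.endsWith('.css')),
  };
}

const assets = collectAssets(builtFiles);
console.log(assets);
```

Since the hashes differ after every code change, a manifest with hard-coded file names would go stale, which is why the names are collected at build time.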

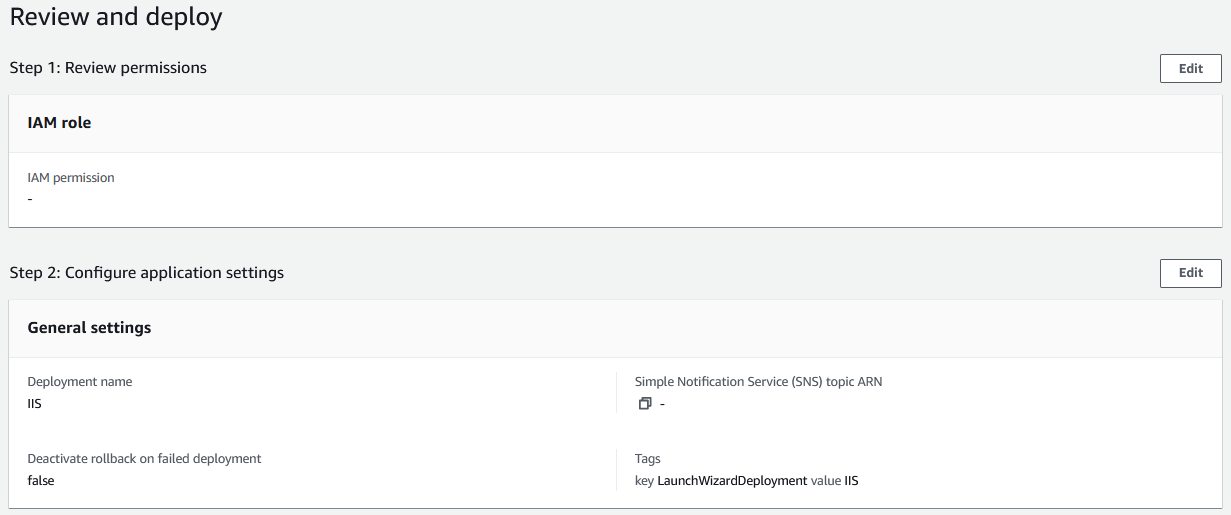

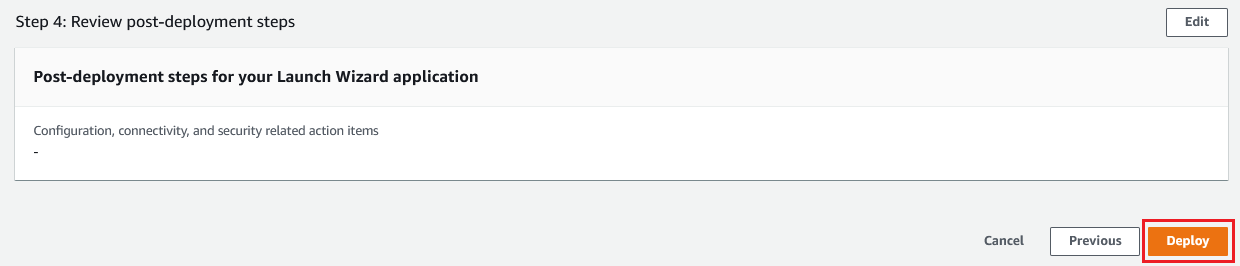

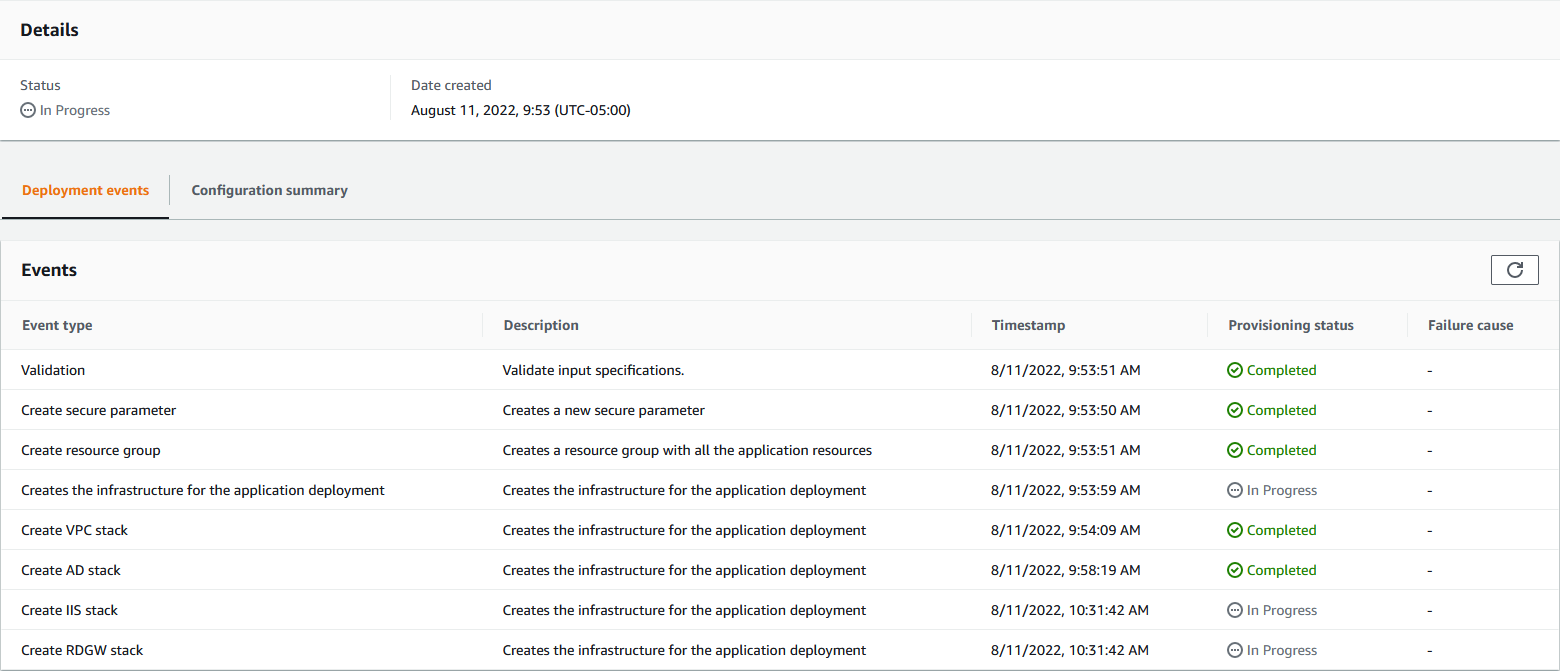

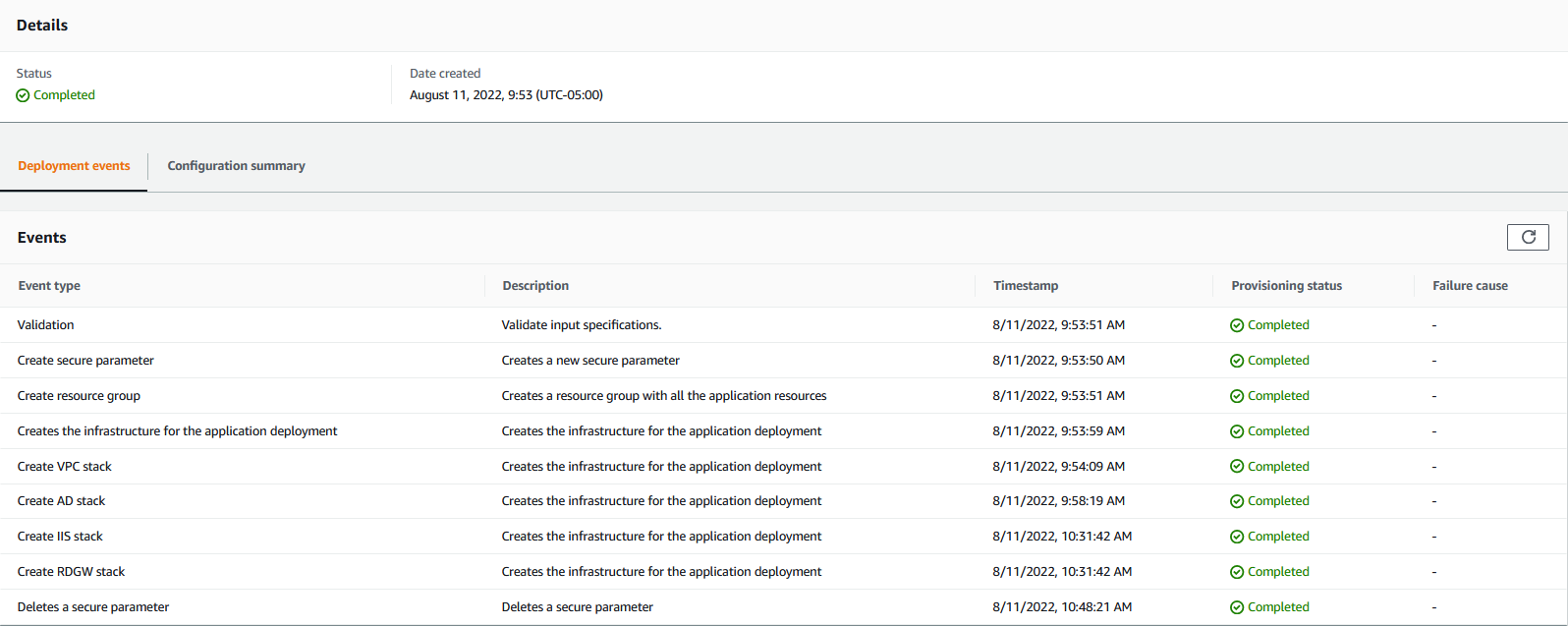

The following steps outline how to create an Amplify Chrome extension:

- Create a React project and install the necessary packages

- Create a Post build script and Manifest

- Add Amplify resources

- Add code to inject the HTML elements

- Test the application in the Chrome browser

Find the GitHub repository here.

Prerequisites

The following prerequisites are required for this post:

Set up a new React project

Let’s create a React project and install the necessary packages by running the following commands. The project name is lowercase because npm naming restrictions don’t allow capital letters:

npx create-react-app amplify-extension
cd amplify-extension

Next, we’ll install the packages the application needs: the Amplify CLI, Amplify UI, and tiny-glob. We use Amplify UI and tiny-glob because they decrease development time; you can replace the scripts and UI components with your preferred alternatives.

npm install -g @aws-amplify/cli
npm install aws-amplify @aws-amplify/ui-react
npm install tiny-glob

Create a Post build script and Add Manifest

Create a folder named scripts at the root of the React application.

In the scripts folder, create a file called postbuild.js and paste the following code:

const fs = require("fs");
const path = require("path");
const glob = require("tiny-glob");
const manifest = require("../public/manifest.json");

// Find build artifacts matching the pattern and return their paths
// relative to the build folder, using forward slashes.
async function getFileNames(pattern) {
  const files = await glob(`build/static${pattern}`);
  return files.map((file) =>
    path.posix.relative("build", file.split(path.sep).join(path.posix.sep))
  );
}

async function main() {
  const js = await getFileNames("/js/**/*.js");
  const css = await getFileNames("/css/**/*.css");
  const logo = await getFileNames("/media/logo*.svg");

  // Copy the base manifest and fill in the generated file names.
  const newManifest = {
    ...manifest,
    content_scripts: [
      {
        ...manifest.content_scripts[0],
        js,
        css,
      },
    ],
    web_accessible_resources: [
      {
        ...manifest.web_accessible_resources[0],
        resources: [...css, ...logo],
      },
    ],
  };

  // Overwrite the manifest that the build copied from the public folder.
  console.log("WRITING", path.resolve("./build/manifest.json"));
  fs.writeFileSync(
    path.resolve("./build/manifest.json"),
    JSON.stringify(newManifest, null, 2),
    "utf8"
  );
}

main();
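To see what this script produces, the merge step can be tried in isolation. The sketch below repeats the spread logic from `main()` on sample data. The hashed file names are invented for illustration, the `mergeManifest` helper is hypothetical, and the base manifest is trimmed to the keys that matter here.

```javascript
// Trimmed-down base manifest with the same shape as this post's
// public/manifest.json.
const baseManifest = {
  manifest_version: 3,
  content_scripts: [{ matches: ['https://docs.amplify.aws/'], js: [], css: [] }],
  web_accessible_resources: [{ resources: [], matches: ['<all_urls>'] }],
};

// Same merge as in main(): keep the existing keys, overwrite the file lists.
function mergeManifest(manifest, { js, css, logo }) {
  return {
    ...manifest,
    content_scripts: [{ ...manifest.content_scripts[0], js, css }],
    web_accessible_resources: [
      { ...manifest.web_accessible_resources[0], resources: [...css, ...logo] },
    ],
  };
}

// Invented file names standing in for real build output.
const merged = mergeManifest(baseManifest, {
  js: ['static/js/main.3f2a1b9c.js'],
  css: ['static/css/main.07c1d2e4.css'],
  logo: ['static/media/logo.6ce24c58.svg'],
});

console.log(JSON.stringify(merged, null, 2));
```

The spread keeps `matches` and any other keys from the base manifest, so only the file lists change between builds.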

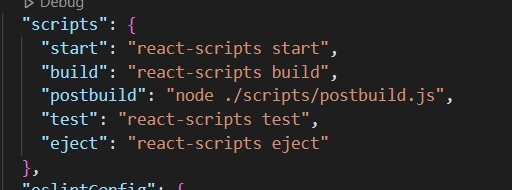

Open the package.json file present at the root of the project and add the following to the scripts block:

"postbuild": "node ./scripts/postbuild.js"

The end result should appear as follows:

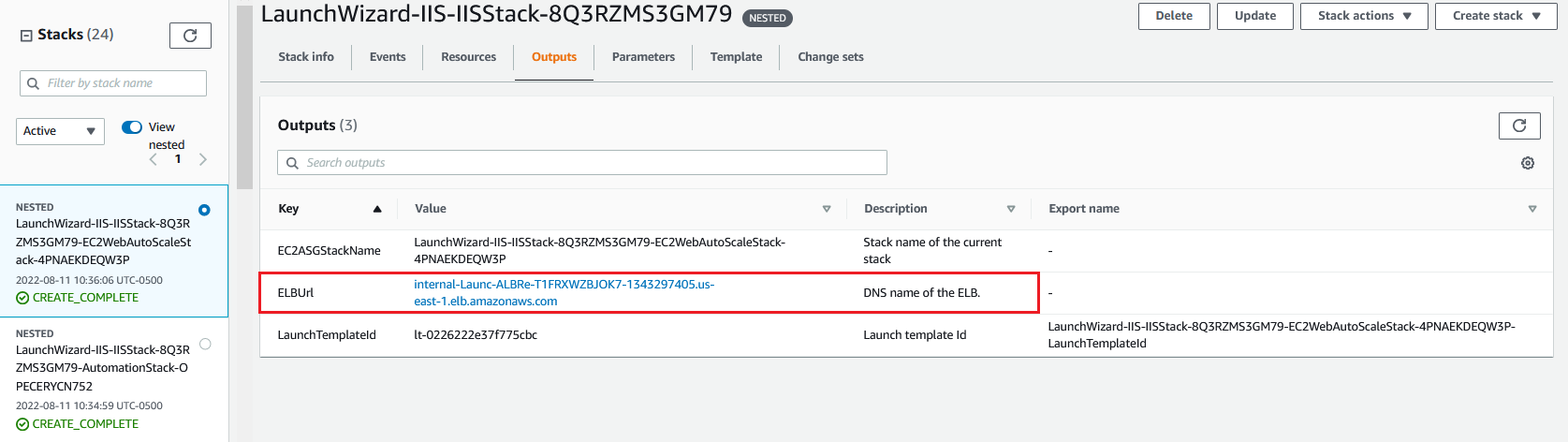

Figure 2. Picture showing the end result for package.json file

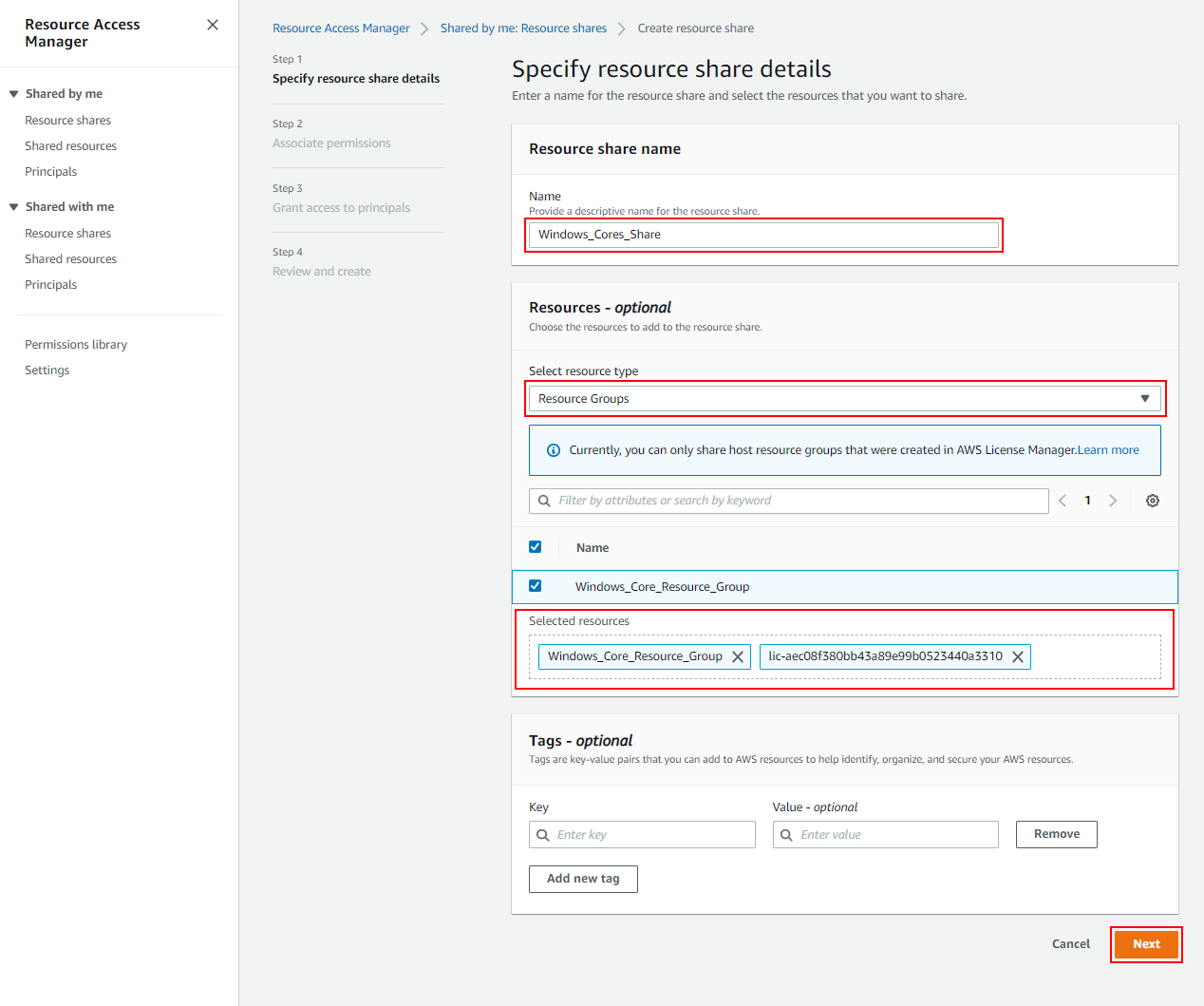

Add the following to the manifest.json present in the public folder at the root of the application:

{

"manifest_version": 3,

"name": "React Content Script",

"version": "0.0.1",

"content_scripts": [

{

"matches": ["https://docs.amplify.aws/"],

"run_at": "document_end",

"js": [],

"css": [],

"media": []

}

],

"web_accessible_resources": [

{

"resources": [],

"matches": ["<all_urls>"]

}

]

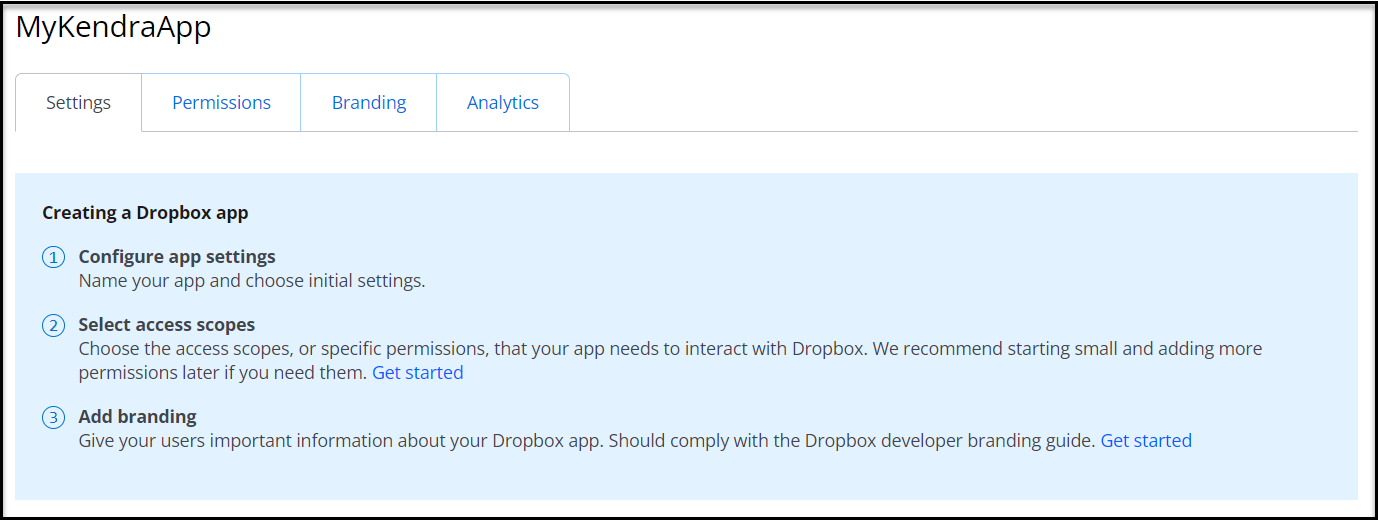

}Add Amplify resources

Let’s initialize the application with Amplify CLI. Run the command:

amplify init

Respond to the prompts as follows:

? Enter a name for the project: AmplifyExtension
The following configuration will be applied:

Project information
| Name: AmplifyExtension
| Environment: dev
| Default editor: Visual Studio Code
| App type: javascript
| Javascript framework: react
| Source Directory Path: src
| Distribution Directory Path: build
| Build Command: npm.cmd run-script build
| Start Command: npm.cmd run-script start

? Initialize the project with the above configuration? (Y/n) Y

Next, let’s add Amazon Cognito as the authentication resource for login. Run the command:

amplify add auth

Select the following prompts:

Do you want to use the default authentication and security configuration? Default configuration
How do you want users to be able to sign in? Username
Do you want to configure advanced settings? No, I am done.

Let’s add an S3 bucket to our application. Run the command:

amplify add storage

Select the following prompts:

- Select Content (Images, audio, video, etc.).

- For who should have access, select Auth users only.

- What kind of access do you want for Authenticated users? create/update, read

To create the resources in the cloud, run the following command:

amplify push

Then, select yes when prompted.
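After a successful push, the CLI generates `src/aws-exports.js`, which the React code passes to `Amplify.configure`. As a hedged sketch, the following checks that a configuration object contains the auth and storage entries this walkthrough relies on. The key names are the ones the CLI typically writes for Cognito and S3 resources (treat them as an assumption and compare against your generated file), the `missingConfigKeys` helper is hypothetical, and every value shown is a placeholder rather than real output.

```javascript
// Keys the Amplify CLI typically writes for auth and storage (assumed here).
const REQUIRED_KEYS = [
  'aws_project_region',
  'aws_cognito_identity_pool_id',
  'aws_user_pools_id',
  'aws_user_pools_web_client_id',
  'aws_user_files_s3_bucket',
  'aws_user_files_s3_bucket_region',
];

// Return the required keys that are missing or empty in a config object.
function missingConfigKeys(config) {
  return REQUIRED_KEYS.filter((key) => !config[key]);
}

// Placeholder config standing in for the generated aws-exports.js.
// The storage keys are left out on purpose to show the check reporting them.
const sampleConfig = {
  aws_project_region: 'us-east-1',
  aws_cognito_identity_pool_id: 'us-east-1:00000000-0000-0000-0000-000000000000',
  aws_user_pools_id: 'us-east-1_EXAMPLE',
  aws_user_pools_web_client_id: 'exampleclientid',
};

console.log(missingConfigKeys(sampleConfig));
```

A non-empty result would suggest that `amplify add storage` or `amplify push` has not completed for the current environment.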

Add code to inject the HTML elements

Add the following to the index.js file present under the src folder:

import React from 'react';
import ReactDOM from 'react-dom';
import './index.css';
import App from './App';
import reportWebVitals from './reportWebVitals';

// Find the body element of the host page
const body = document.querySelector('body');

// Create a div element to mount the React app into
const app = document.createElement('div');
app.id = 'root';

// Insert the div at the top of the page
if (body) {
  body.prepend(app);
}

ReactDOM.render(
  <React.StrictMode>
    <App />
  </React.StrictMode>,
  document.getElementById('root')
);

reportWebVitals();
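If the host page happens to use the id `root` itself, the injected element and the page's own element would collide. One possible variation, which is not part of the original sample, is to use a more specific id and to reuse the element if it already exists. The id `amplify-extension-root` and the `ensureMountPoint` helper below are hypothetical names chosen for this sketch.

```javascript
// Hypothetical id; any value unlikely to clash with the host page works.
const MOUNT_ID = 'amplify-extension-root';

// Return the existing mount element, or create one at the top of <body>.
// The document is passed in so the helper can be exercised outside a browser.
function ensureMountPoint(doc, id = MOUNT_ID) {
  const existing = doc.getElementById(id);
  if (existing) {
    return existing;
  }
  const element = doc.createElement('div');
  element.id = id;
  doc.body.prepend(element);
  return element;
}

// Only touch the DOM when running in a browser (for example, as a content script).
if (typeof document !== 'undefined' && document.body) {
  ensureMountPoint(document);
}
```

The returned element could then be passed to `ReactDOM.render` in place of `document.getElementById('root')`.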

Next add the following to the App.js file in the src folder:

import logo from './logo.svg';
import './App.css';
import { Amplify } from 'aws-amplify';
import { withAuthenticator } from '@aws-amplify/ui-react';
import '@aws-amplify/ui-react/styles.css';
import { AmplifyS3Album } from '@aws-amplify/ui-react/legacy';
import awsExports from './aws-exports';

// Configure Amplify with the generated backend settings
Amplify.configure(awsExports);

function App() {
  return (
    <div className="App">
      <header className="App-header">
        <p>Hello</p>
        <AmplifyS3Album />
      </header>
    </div>
  );
}

// Wrap App in withAuthenticator so that users must sign in first
export default withAuthenticator(App);

Test the Application in a Chrome browser

Run the following command to build the application:

npm run build

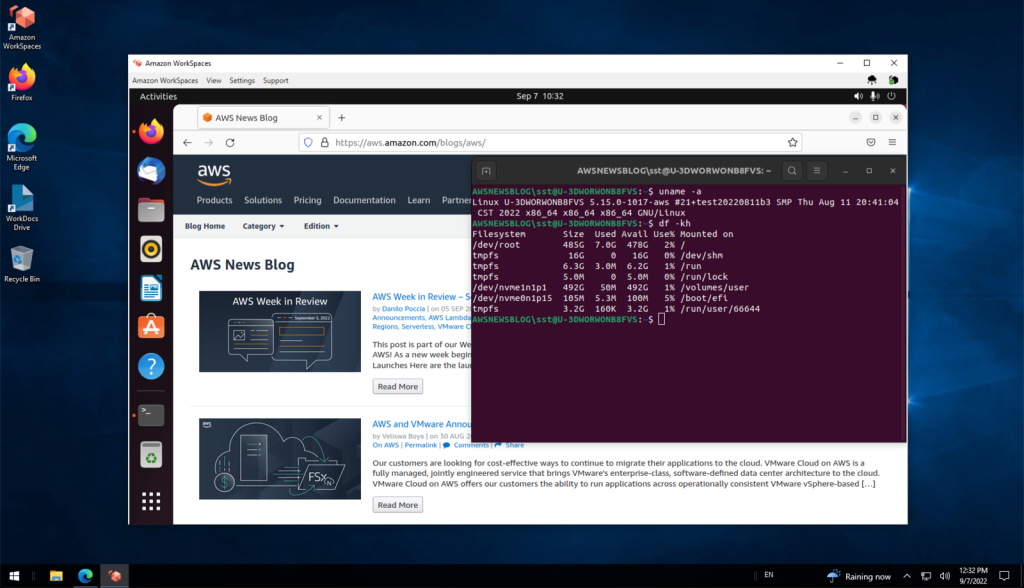

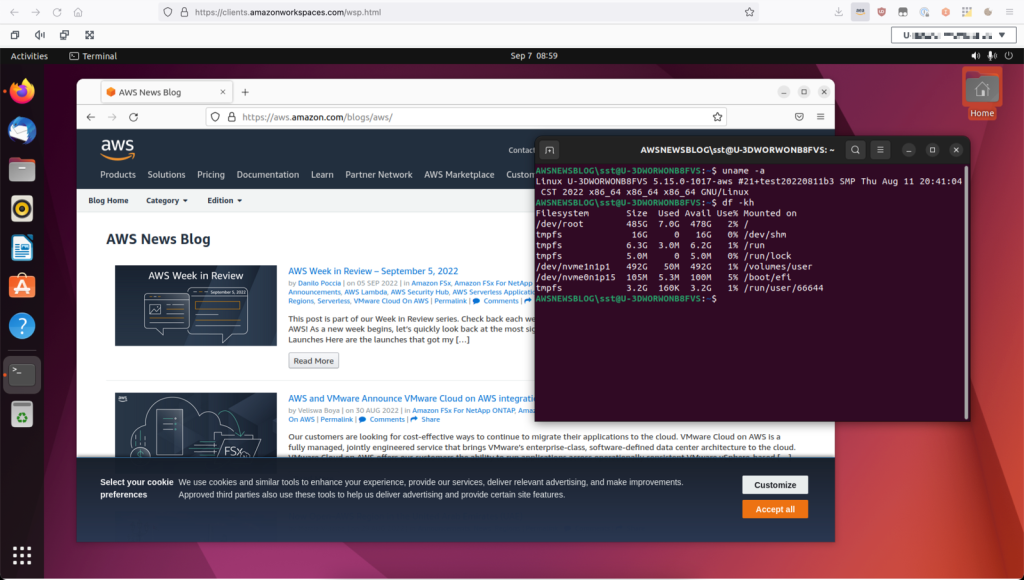

To test in the browser:

- Open the Chrome browser.

- Open the Extensions page from the Chrome menu (or go to chrome://extensions).

- In the top-right corner of the page, turn on Developer mode.

- Select Load unpacked and choose the build folder of the application. This folder is at the root of the React application and is generated by the build command.

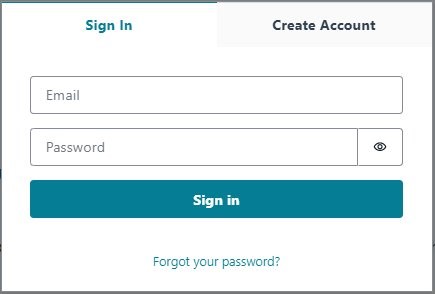

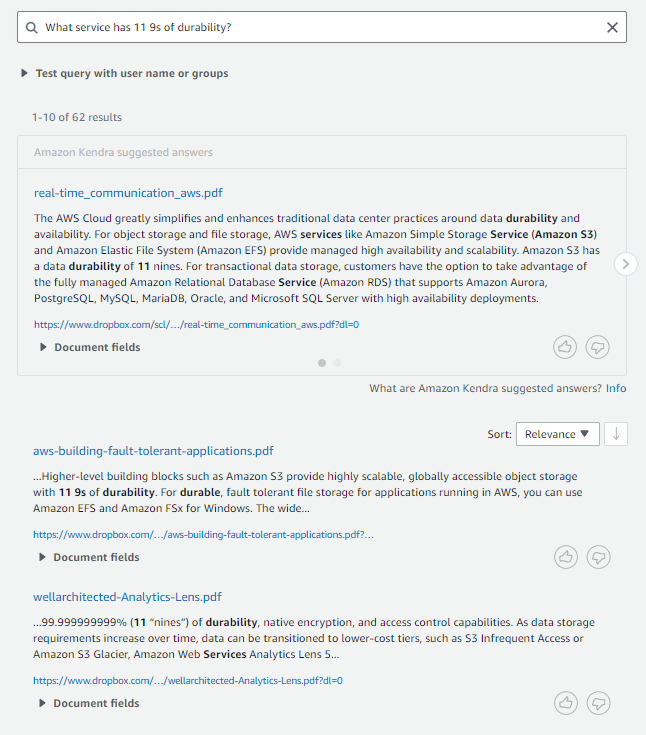

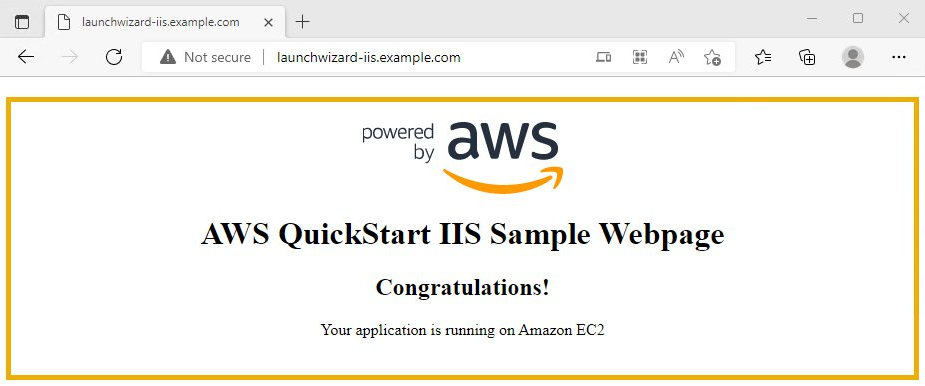

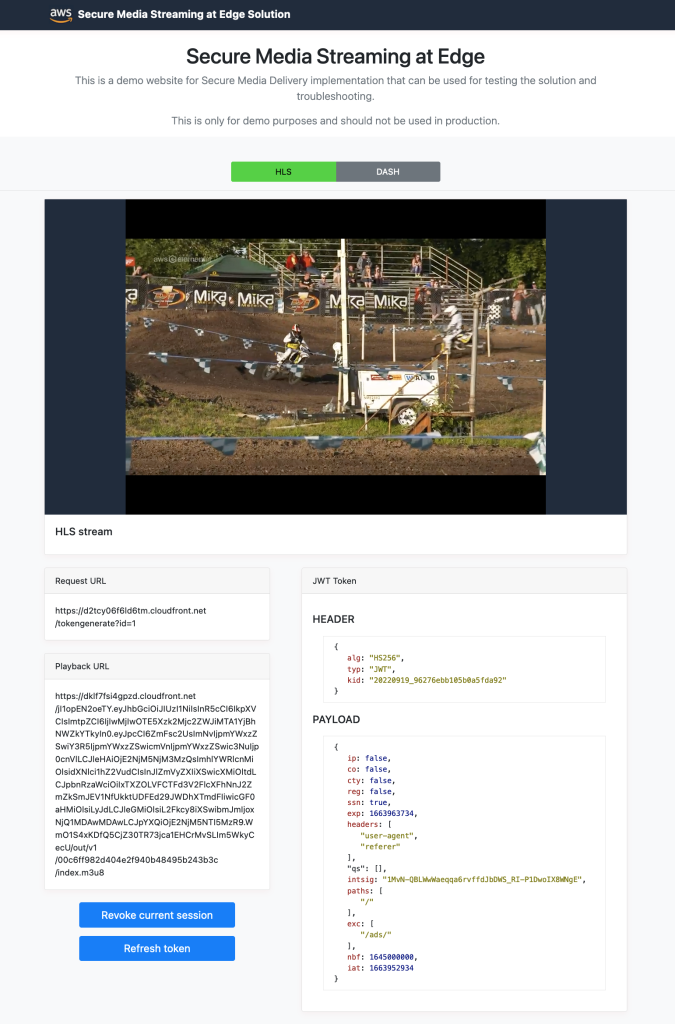

- Open https://docs.amplify.aws/ in a new tab and observe the following at the top of the page:

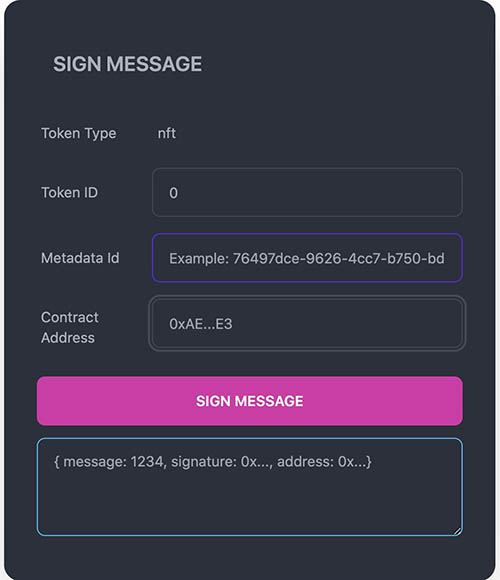

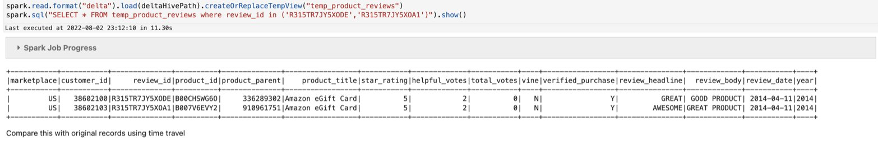

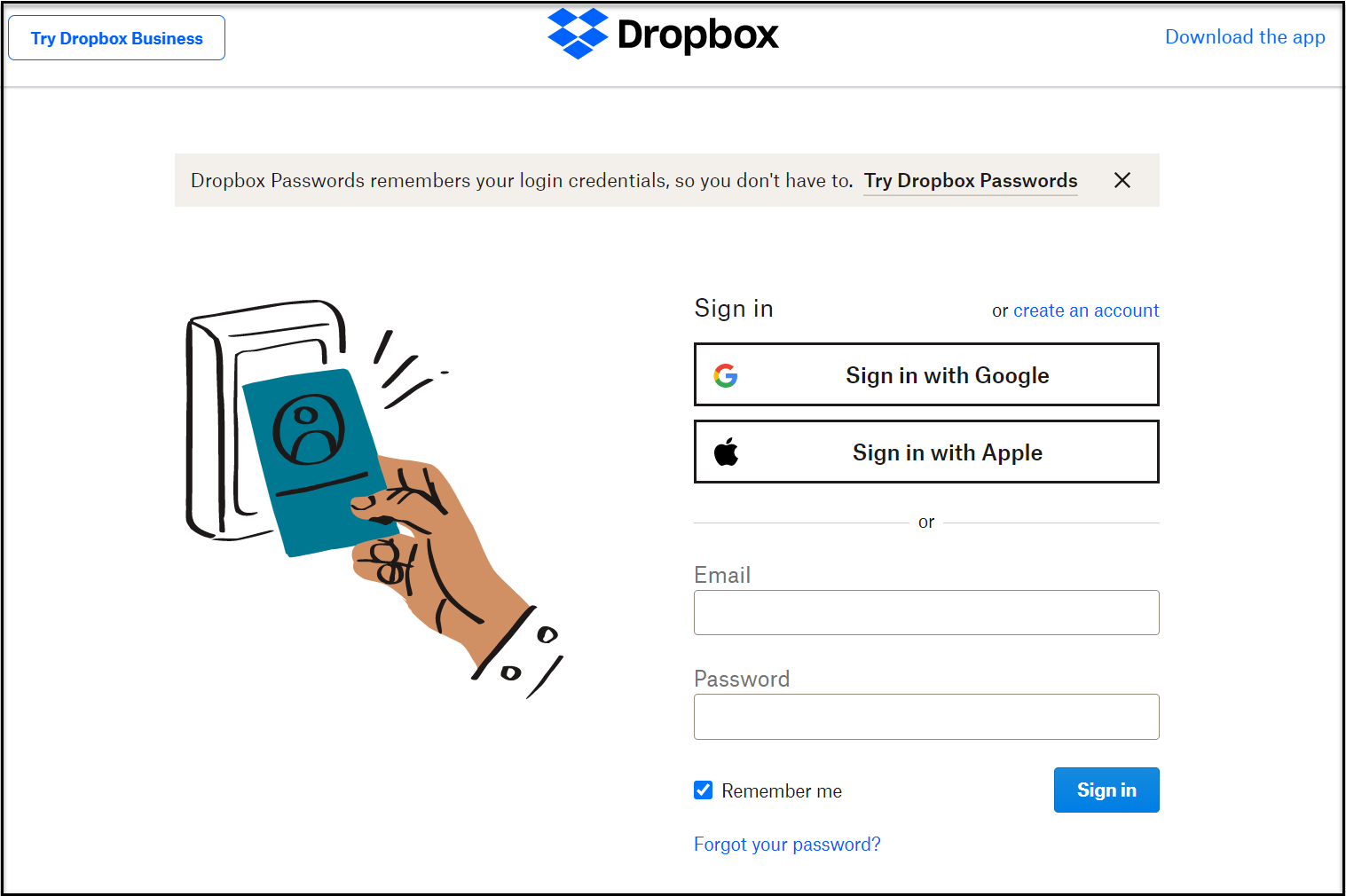

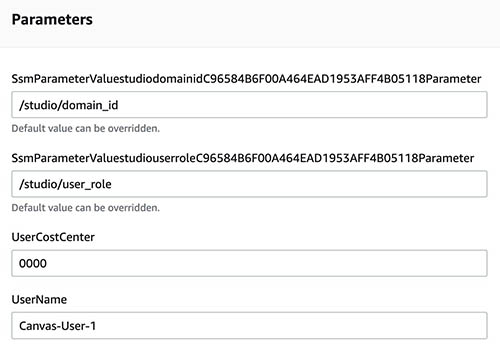

Figure 3. Cognito login screen to authenticate a user with email and password

- Create an account. Note that you must provide a valid email, as a verification code will be sent.

- After you log in, a button appears at the top of the page.

- Select the Pick a file button and choose a picture from your file system. The output appears as follows.

In the background, the picture is uploaded to the S3 bucket and then displayed on the page.

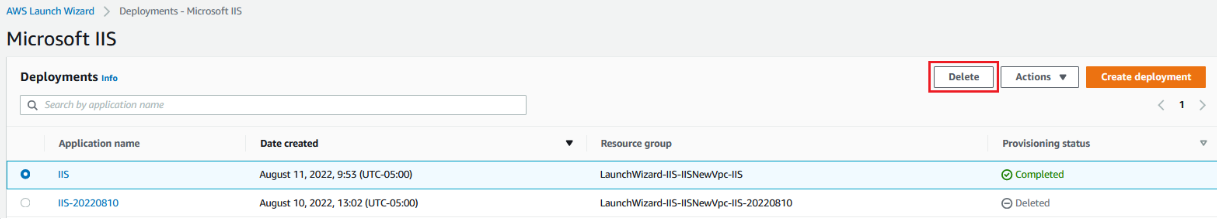

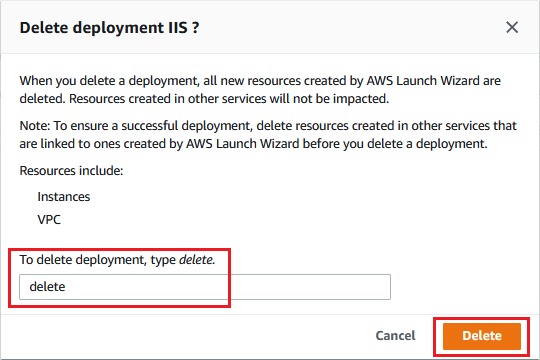
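The UI component handles the upload, but the underlying flow can be sketched by hand. The example below assumes that an object behaving like the `Storage` category of the `aws-amplify` package (as exposed in its v4/v5 releases, with `put` and `get` methods) is passed in as the `storage` argument. The `uploadImage` helper and the `screenshots/` key prefix are made up for illustration.

```javascript
// Upload a file under a key prefix, then resolve a URL for displaying it.
// `storage` is expected to behave like Amplify's Storage category (assumption):
//   put(key, object, options) resolves to { key }
//   get(key) resolves to a URL string
async function uploadImage(storage, file, prefix = 'screenshots/') {
  // Prepend a timestamp so repeated uploads of the same file name don't clash.
  const key = `${prefix}${Date.now()}-${file.name}`;
  const result = await storage.put(key, file, { contentType: file.type });
  const url = await storage.get(result.key);
  return { key: result.key, url };
}
```

In the extension, this might be called as `uploadImage(Storage, fileInput.files[0])` after importing `Storage` from `aws-amplify`, where `fileInput` is a hypothetical file input element.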

Clean up resources

Now that you’ve finished this walkthrough, you can delete your Amplify application if you aren’t going to use it anymore. Run the following command in the terminal at the root of the project:

amplify delete

Note that this action can’t be undone. Once the project is deleted, you can’t recover it. If you need it again, then you must re-deploy it.

To prevent data loss, Amplify doesn’t delete the Amazon S3 storage bucket created via the CLI. We can delete the bucket on the Amazon S3 console. The bucket name contains the name of the application (for example: AmplifyExtension), so you can search for it by that name.

Delete the extension from the Chrome browser.

Conclusion

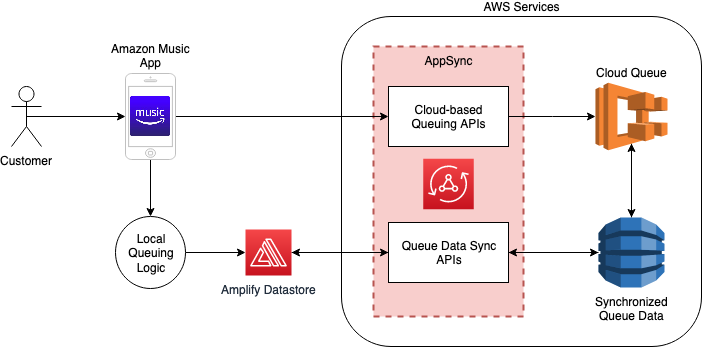

We can create Chrome extensions that allow users to consume Amplify resources, such as Amazon S3 storage and Amazon Cognito. Furthermore, we can use AWS AppSync API capabilities to retrieve data from an Amazon DynamoDB table.

A sample application can be found on GitHub here.

About the author:

]]>

Satyasovan Tripathy works as a Senior Specialist Solution Architect at AWS. He is situated in Bengaluru, India, and focuses on the AWS Digital User Engagement product portfolio. He enjoys reading and travelling outside of work.

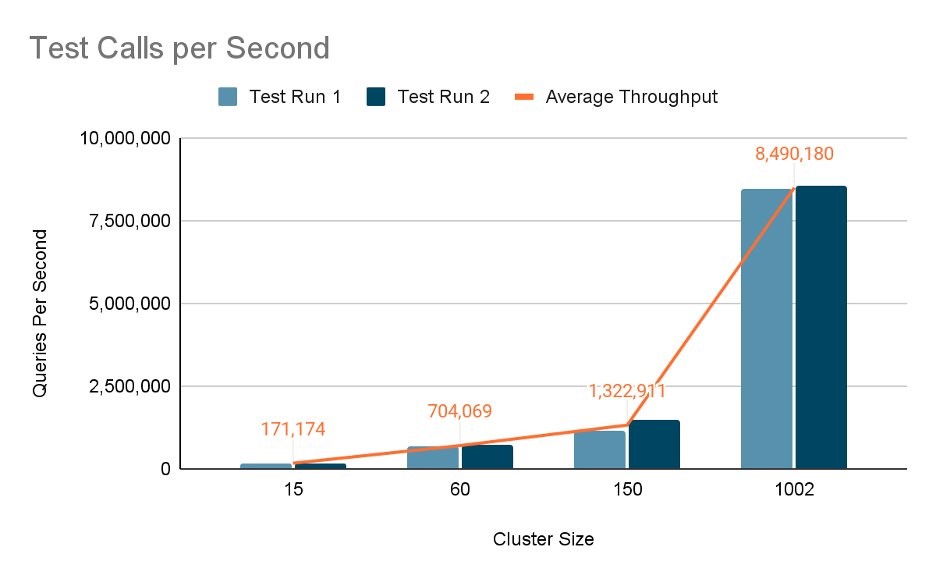

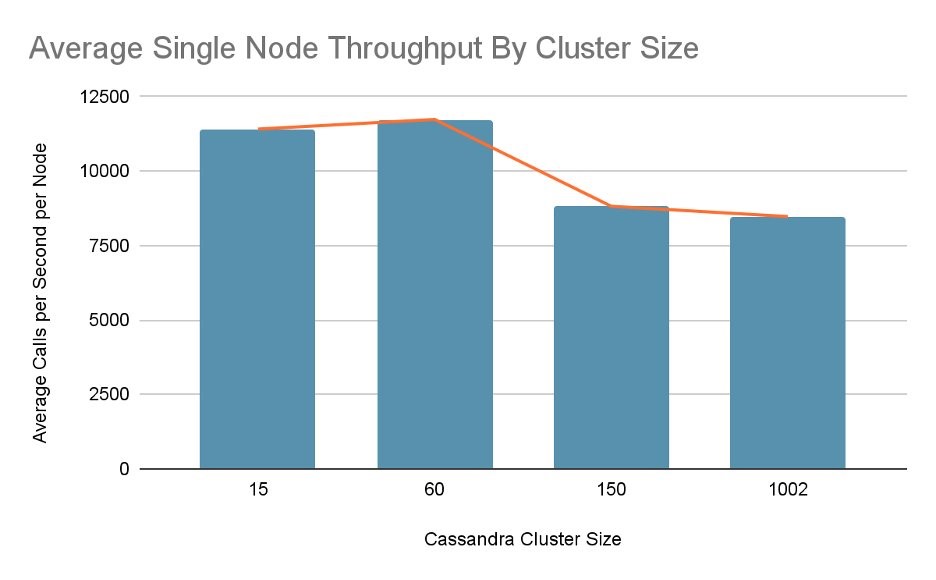

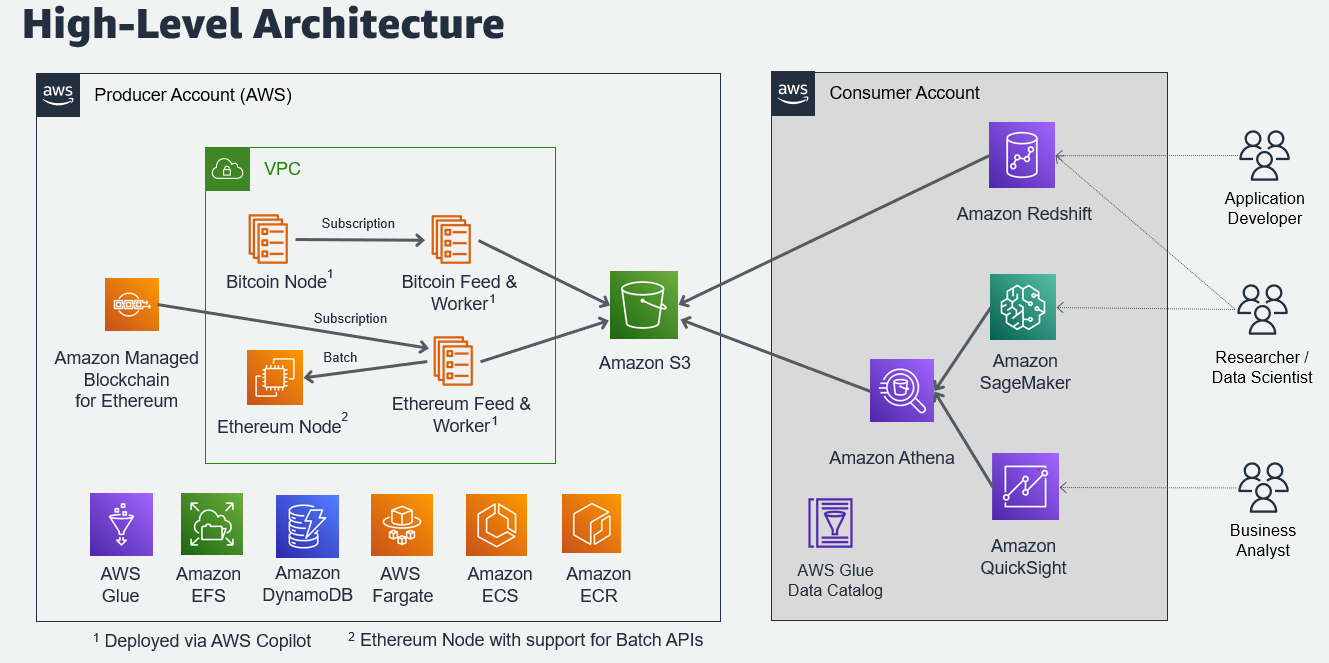

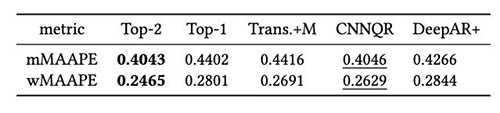

Satyasovan Tripathy works as a Senior Specialist Solution Architect at AWS. He is situated in Bengaluru, India, and focuses on the AWS Digital User Engagement product portfolio. He enjoys reading and travelling outside of work. Nikhil Khokhar is a Solutions Architect at AWS. He specializes in building and supporting data streaming solutions that help customers analyze and get value out of their data. In his free time, he makes use of his 3D printing skills to solve everyday problems.

Nikhil Khokhar is a Solutions Architect at AWS. He specializes in building and supporting data streaming solutions that help customers analyze and get value out of their data. In his free time, he makes use of his 3D printing skills to solve everyday problems.

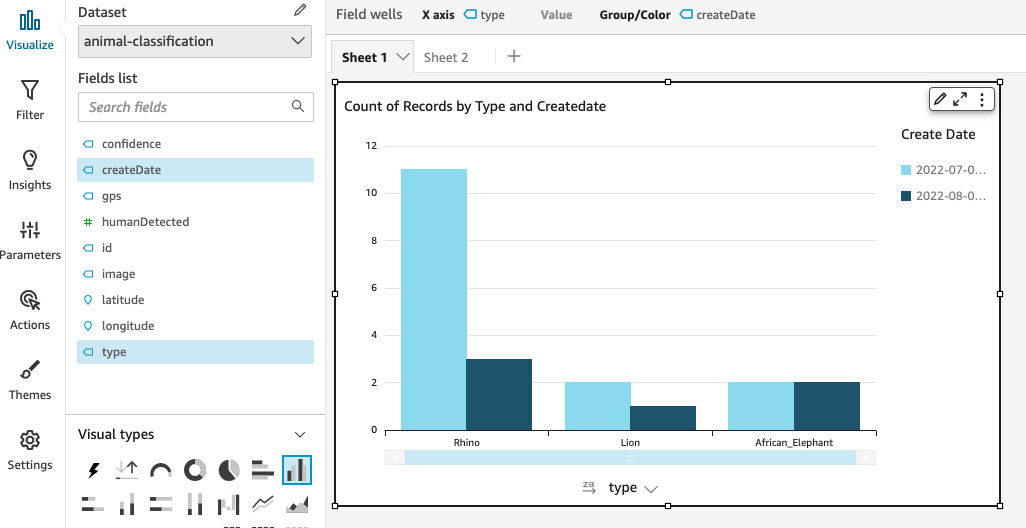

Avijit Goswami is a Principal Solutions Architect at AWS specialized in data and analytics. He supports AWS strategic customers in building high-performing, secure, and scalable data lake solutions on AWS using AWS managed services and open-source solutions. Outside of his work, Avijit likes to travel, hike in the San Francisco Bay Area trails, watch sports, and listen to music.

Avijit Goswami is a Principal Solutions Architect at AWS specialized in data and analytics. He supports AWS strategic customers in building high-performing, secure, and scalable data lake solutions on AWS using AWS managed services and open-source solutions. Outside of his work, Avijit likes to travel, hike in the San Francisco Bay Area trails, watch sports, and listen to music. Ajit Tandale is a Big Data Solutions Architect at Amazon Web Services. He helps AWS strategic customers accelerate their business outcomes by providing expertise in big data using AWS managed services and open-source solutions. Outside of work, he enjoys reading, biking, and watching sci-fi movies.

Ajit Tandale is a Big Data Solutions Architect at Amazon Web Services. He helps AWS strategic customers accelerate their business outcomes by providing expertise in big data using AWS managed services and open-source solutions. Outside of work, he enjoys reading, biking, and watching sci-fi movies. Thippana Vamsi Kalyan is a Software Development Engineer at AWS. He is passionate about learning and building highly scalable and reliable data analytics services and solutions on AWS. In his free time, he enjoys reading, being outdoors with his wife and kid, walking, and watching sports and movies.

Thippana Vamsi Kalyan is a Software Development Engineer at AWS. He is passionate about learning and building highly scalable and reliable data analytics services and solutions on AWS. In his free time, he enjoys reading, being outdoors with his wife and kid, walking, and watching sports and movies.

Divya Sharma is a Database Specialist Solutions architect at AWS, focusing on RDS/Aurora PostgreSQL. She has helped multiple enterprise customers move their databases to AWS, providing assistance on PostgreSQL performance and best practices.

Divya Sharma is a Database Specialist Solutions architect at AWS, focusing on RDS/Aurora PostgreSQL. She has helped multiple enterprise customers move their databases to AWS, providing assistance on PostgreSQL performance and best practices.

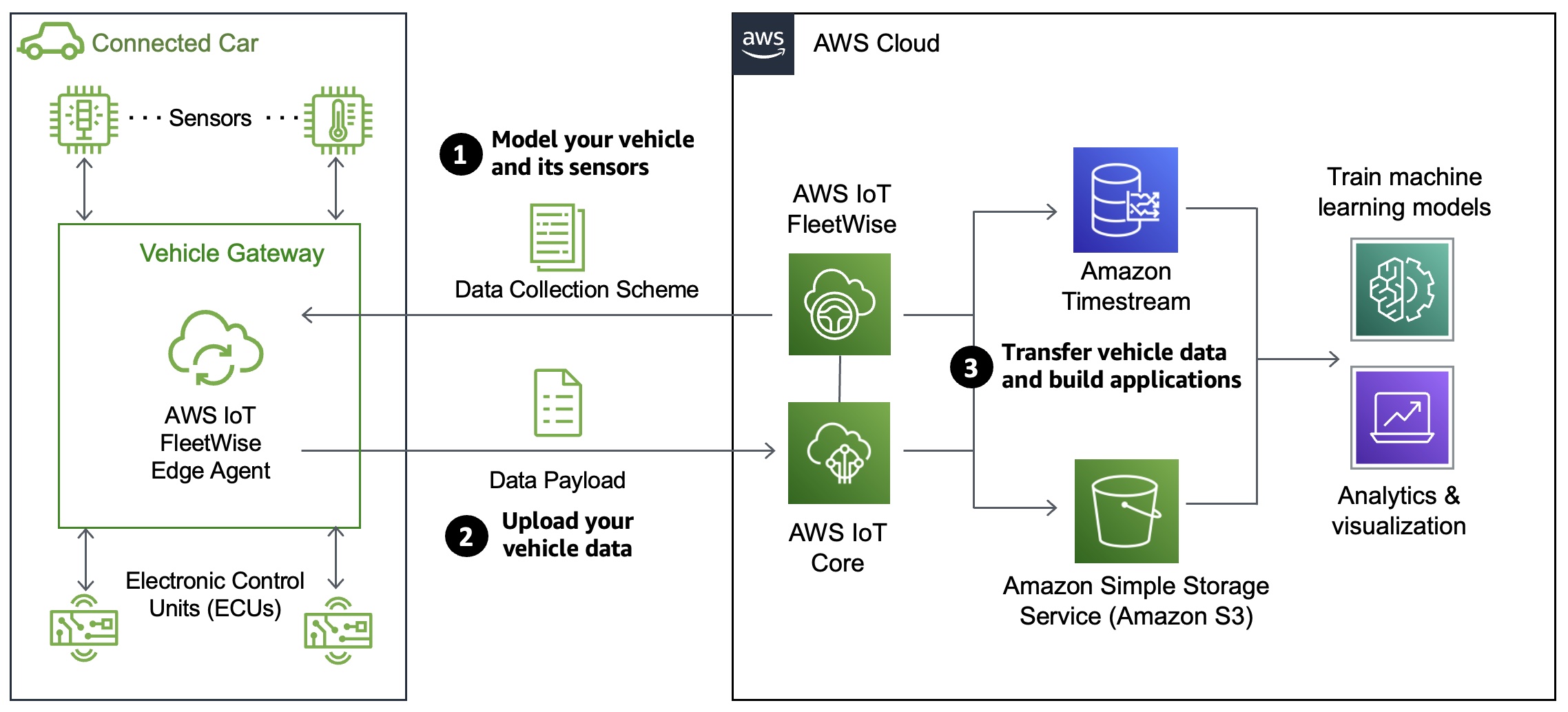

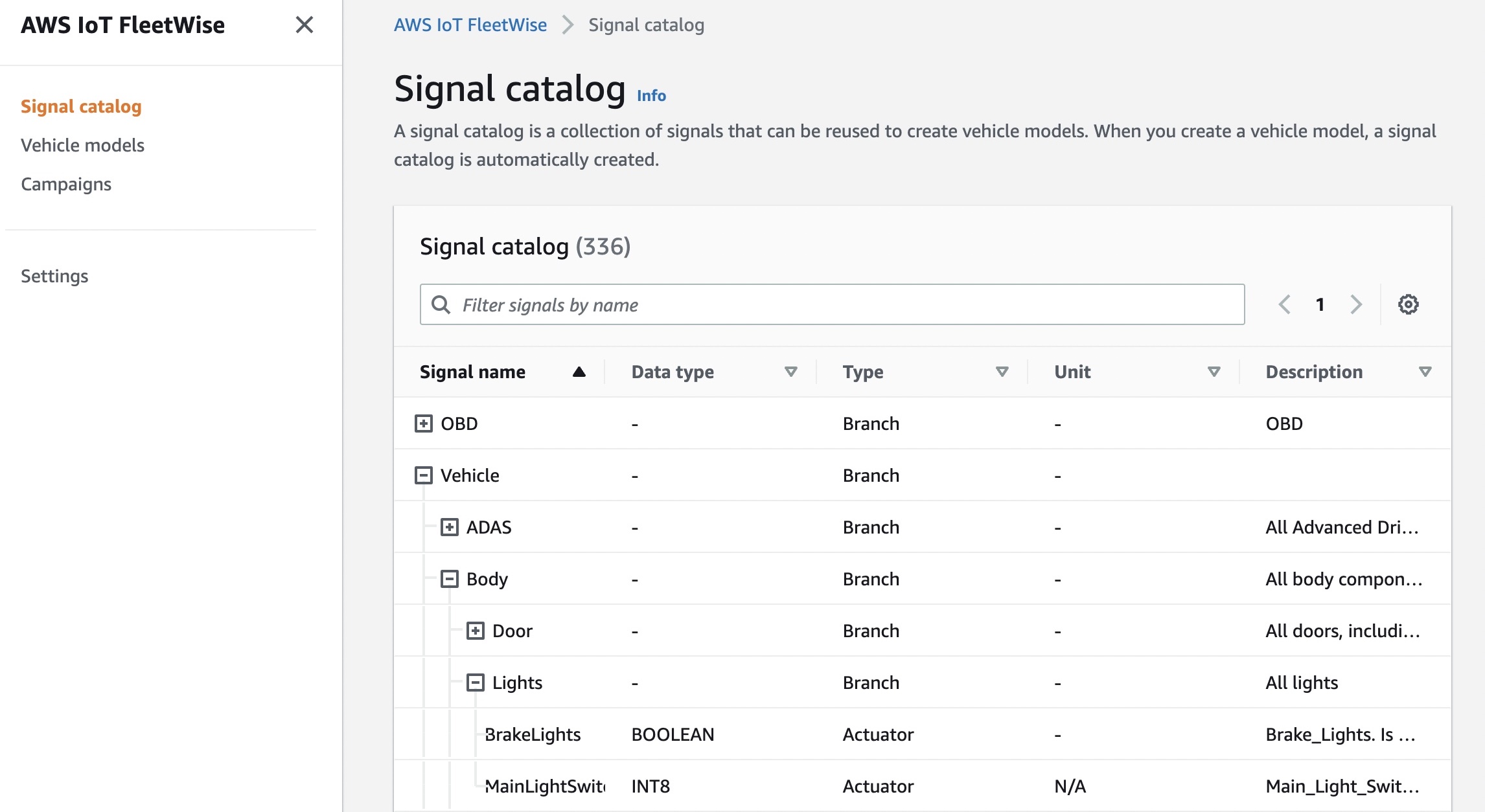

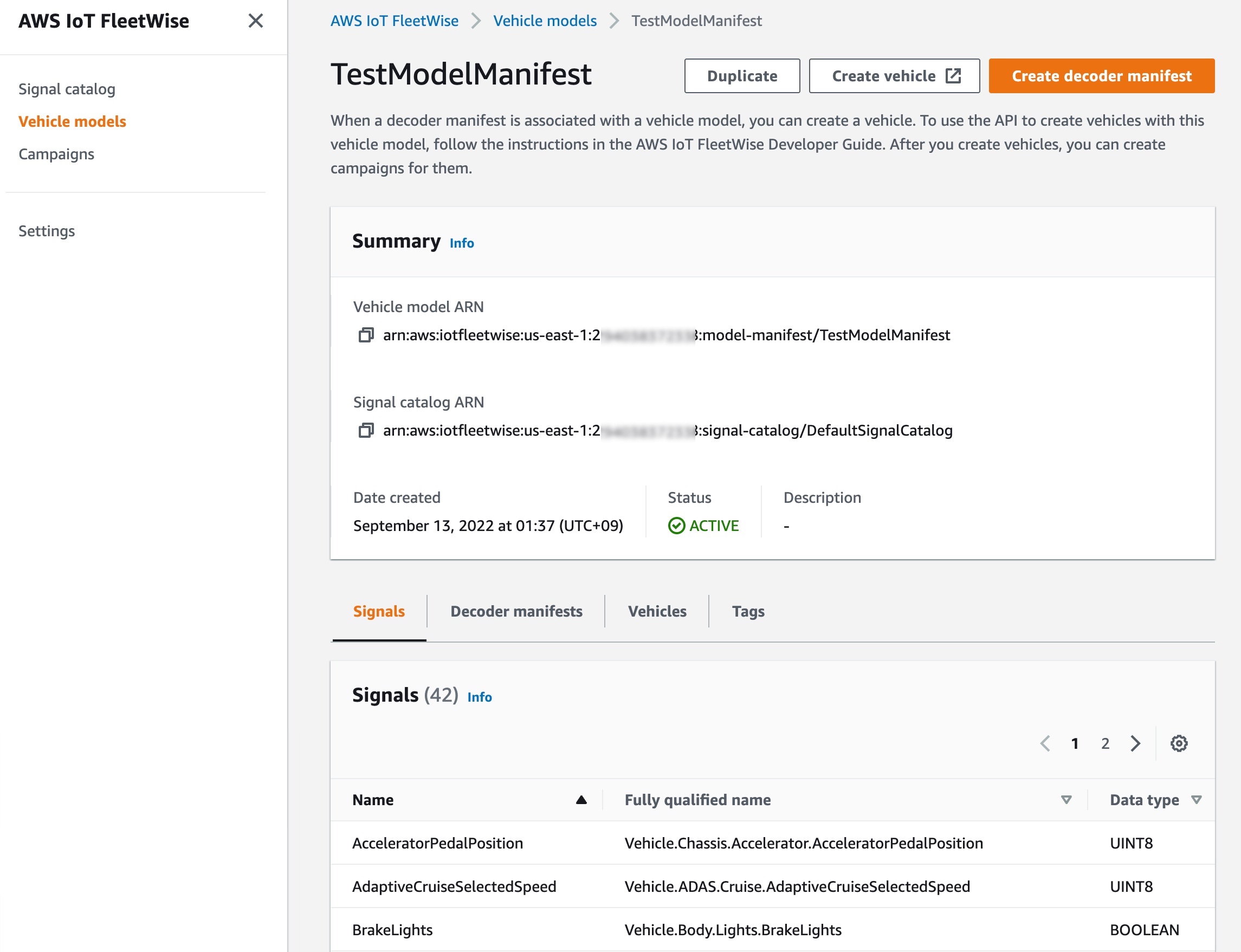

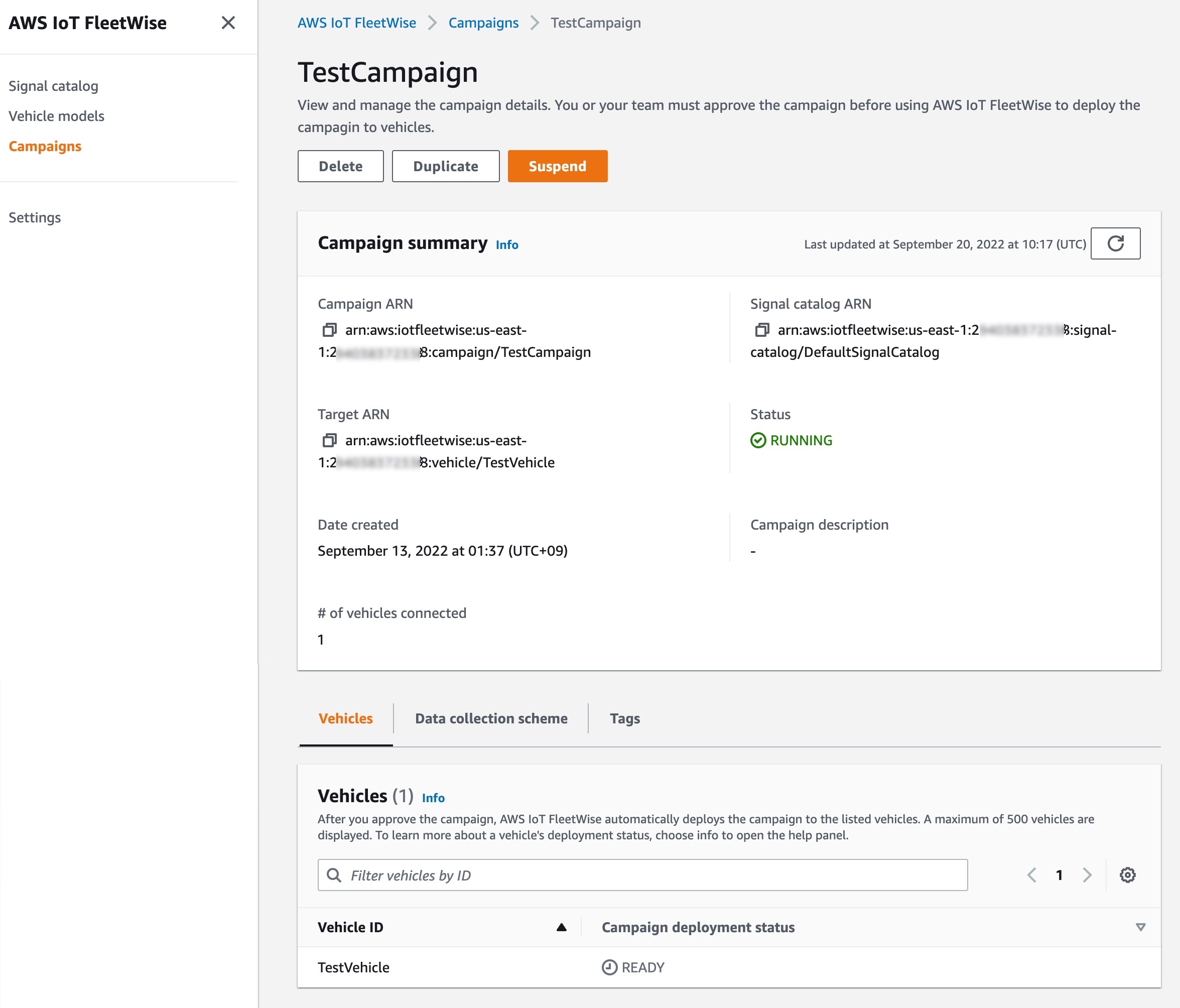

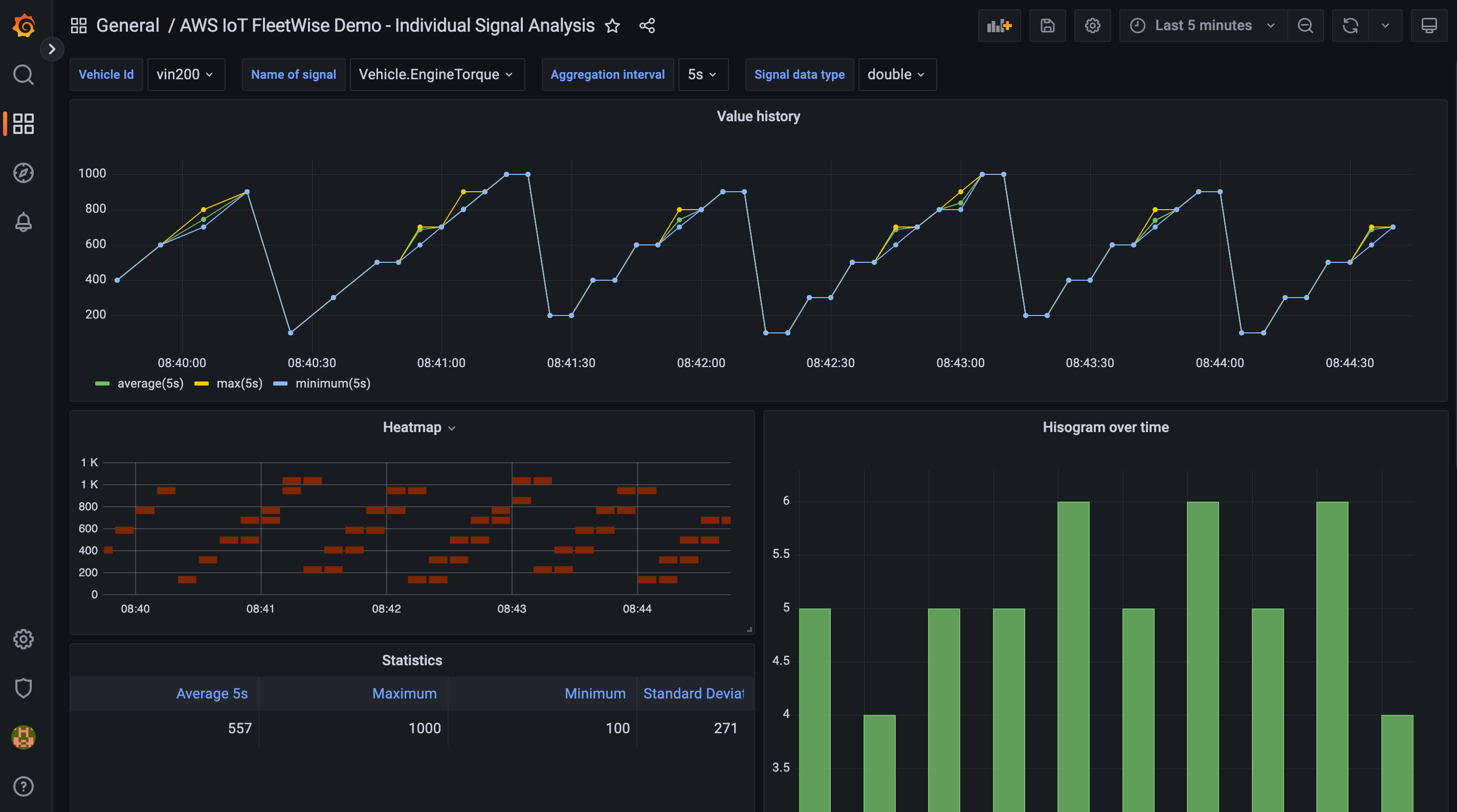

In spite of the fact that both vehicles encode the CAN message for the signal

In spite of the fact that both vehicles encode the CAN message for the signal

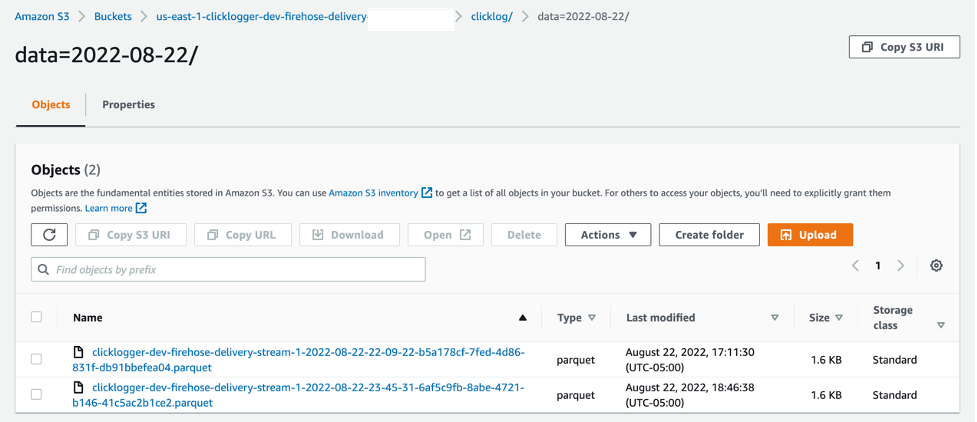

![If your records are successfully put into the stream you should see records in your S3 bucket generated by the one-click deployable solution titled “[Cloudformation-name]-analyticsbucket-[random string of numbers/letters]”.](https://d2908q01vomqb2.cloudfront.net/91032ad7bbcb6cf72875e8e8207dcfba80173f7c/2022/09/28/image6-1.png)

At AWS, we know that building a dream is best achieved by having support from people like you who want to get where you’re going as much as you do. To

At AWS, we know that building a dream is best achieved by having support from people like you who want to get where you’re going as much as you do. To

Jared Heywood is a Senior Business Development Manager at AWS. He is a global AI/ML specialist helping customers with no-code machine learning. He has worked in the AutoML space for the past 5 years and launched products at Amazon like Amazon SageMaker JumpStart and Amazon SageMaker Canvas.

Jared Heywood is a Senior Business Development Manager at AWS. He is a global AI/ML specialist helping customers with no-code machine learning. He has worked in the AutoML space for the past 5 years and launched products at Amazon like Amazon SageMaker JumpStart and Amazon SageMaker Canvas.

Martin Zoellner is an IT Specialist at BMW Group. His role in the project is Subject Matter Expert for DevOps and ETL/SW Architecture.

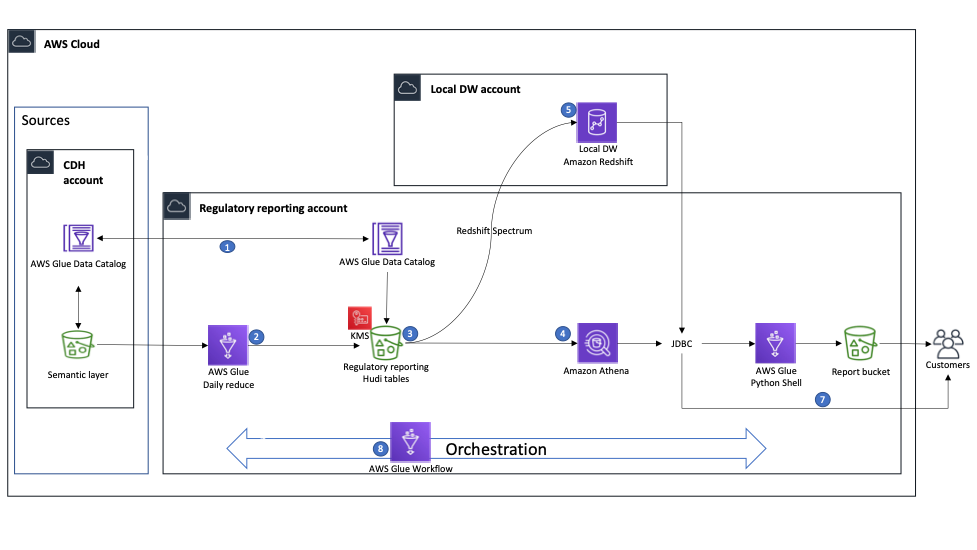

Martin Zoellner is an IT Specialist at BMW Group. His role in the project is Subject Matter Expert for DevOps and ETL/SW Architecture. Thomas Ehrlich is the functional maintenance manager of Regulatory Reporting application in one of the European BMW market.

Thomas Ehrlich is the functional maintenance manager of Regulatory Reporting application in one of the European BMW market. Veronika Bogusch is an IT Specialist at BMW. She initiated the rebuild of the Financial Services Batch Integration Layer via the Cloud Data Hub. The ingested data assets are the base for the Regulatory Reporting use case described in this article.

Veronika Bogusch is an IT Specialist at BMW. She initiated the rebuild of the Financial Services Batch Integration Layer via the Cloud Data Hub. The ingested data assets are the base for the Regulatory Reporting use case described in this article. George Komninos is a solutions architect for the Amazon Web Services (AWS) Data Lab. He helps customers convert their ideas to a production-ready data product. Before AWS, he spent three years at Alexa Information domain as a data engineer. Outside of work, George is a football fan and supports the greatest team in the world, Olympiacos Piraeus.

George Komninos is a solutions architect for the Amazon Web Services (AWS) Data Lab. He helps customers convert their ideas to a production-ready data product. Before AWS, he spent three years at Alexa Information domain as a data engineer. Outside of work, George is a football fan and supports the greatest team in the world, Olympiacos Piraeus. Rahul Shaurya is a Senior Big Data Architect with AWS Professional Services. He helps and works closely with customers building data platforms and analytical applications on AWS. Outside of work, Rahul loves taking long walks with his dog Barney.

Rahul Shaurya is a Senior Big Data Architect with AWS Professional Services. He helps and works closely with customers building data platforms and analytical applications on AWS. Outside of work, Rahul loves taking long walks with his dog Barney.

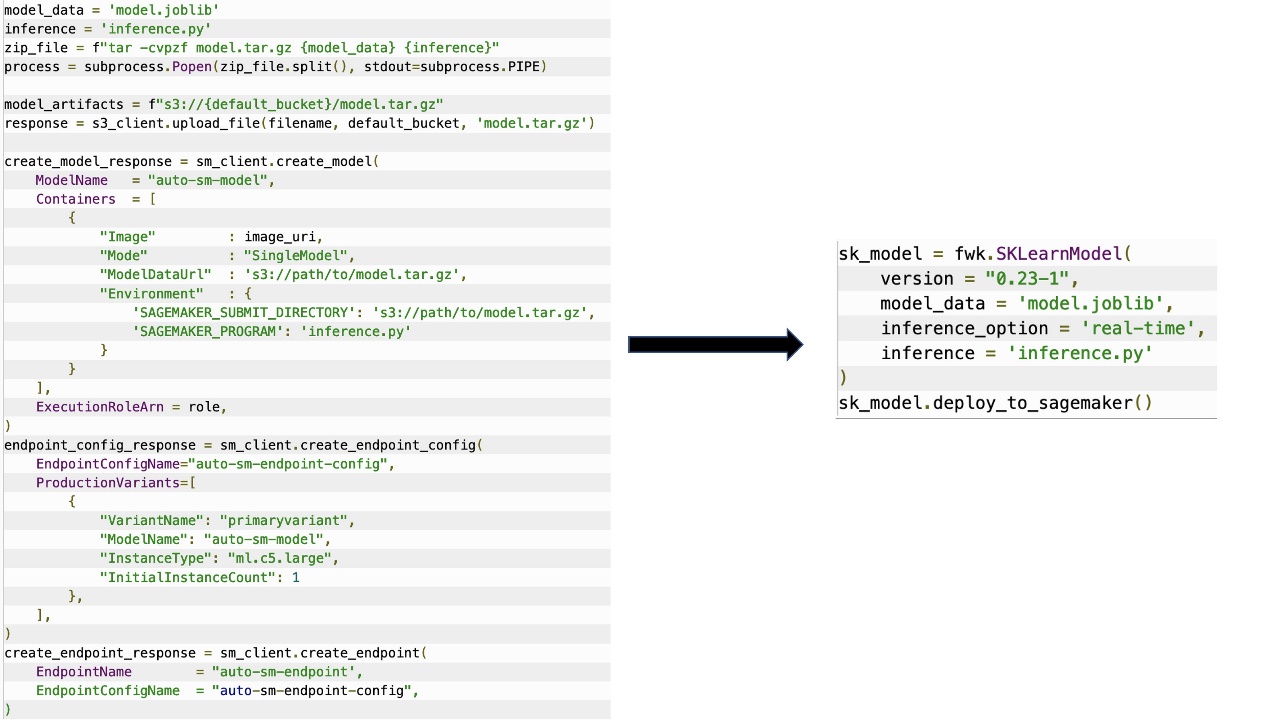

Kirit Thadaka is an ML Solutions Architect working in the Amazon SageMaker Service SA team. Prior to joining AWS, Kirit spent time working in early stage AI startups followed by some time in consulting in various roles in AI research, MLOps, and technical leadership.

Kirit Thadaka is an ML Solutions Architect working in the Amazon SageMaker Service SA team. Prior to joining AWS, Kirit spent time working in early stage AI startups followed by some time in consulting in various roles in AI research, MLOps, and technical leadership. Ram Vegiraju is a ML Architect with the SageMaker Service team. He focuses on helping customers build and optimize their AI/ML solutions on Amazon SageMaker. In his spare time, he loves traveling and writing.

Ram Vegiraju is a ML Architect with the SageMaker Service team. He focuses on helping customers build and optimize their AI/ML solutions on Amazon SageMaker. In his spare time, he loves traveling and writing.

For example,

For example,

AWS Partner spotlight

AWS Partner spotlight

For this specific example, we use the following information for our Azure SQL Instance:

For this specific example, we use the following information for our Azure SQL Instance:

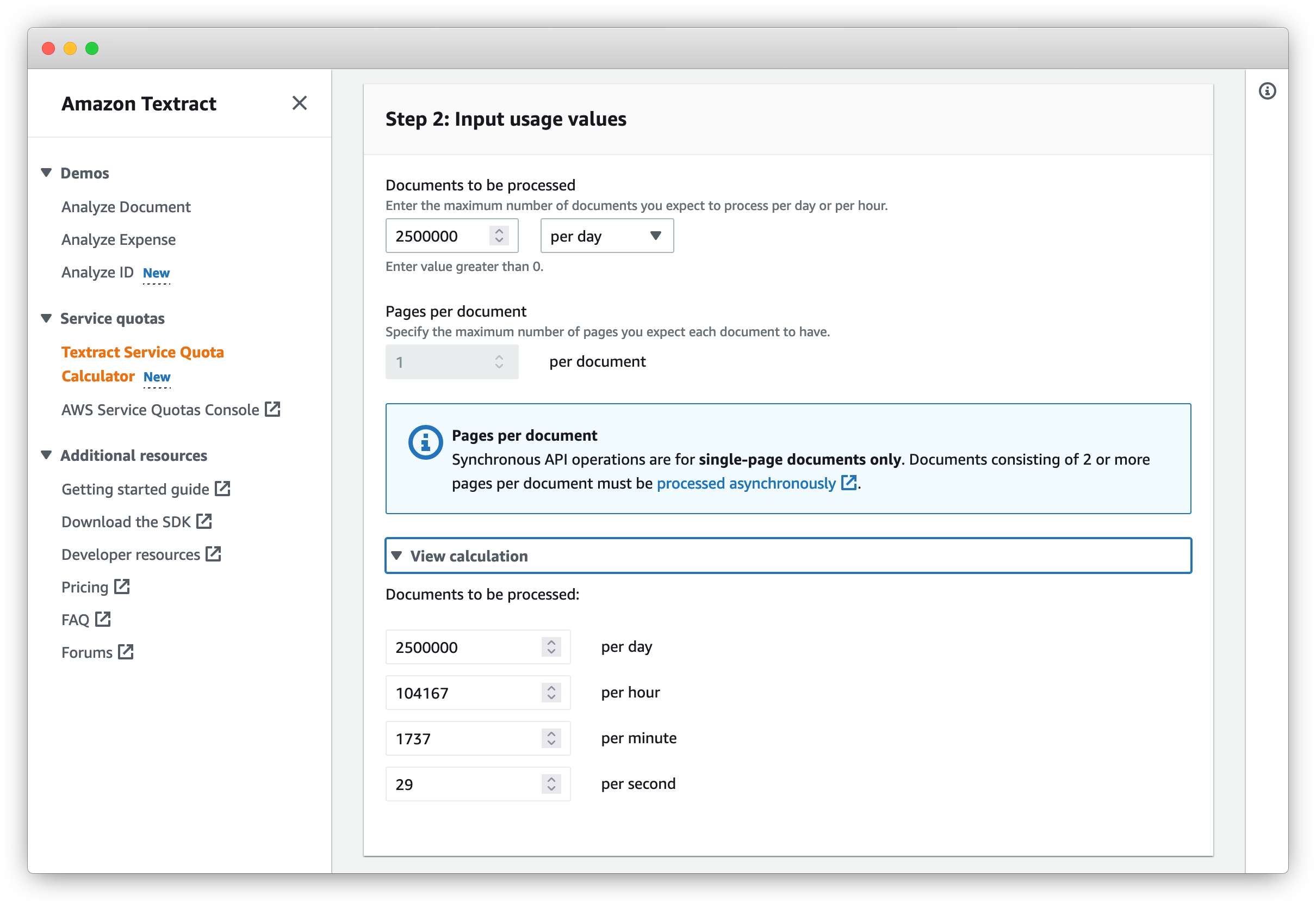

Anjan Biswas is a Senior AI Services Solutions Architect with focus on AI/ML and Data Analytics. Anjan is part of the world-wide AI services team and works with customers to help them understand, and develop solutions to business problems with AI and ML. Anjan has over 14 years of experience working with global supply chain, manufacturing, and retail organizations and is actively helping customers get started and scale on AWS AI services.

Anjan Biswas is a Senior AI Services Solutions Architect with focus on AI/ML and Data Analytics. Anjan is part of the world-wide AI services team and works with customers to help them understand, and develop solutions to business problems with AI and ML. Anjan has over 14 years of experience working with global supply chain, manufacturing, and retail organizations and is actively helping customers get started and scale on AWS AI services. Shashwat Sapre is a Senior Technical Product Manager with the Amazon Textract team. He is focused on building machine learning-based services for AWS customers. In his spare time, he likes reading about new technologies, traveling and exploring different cuisines.

Shashwat Sapre is a Senior Technical Product Manager with the Amazon Textract team. He is focused on building machine learning-based services for AWS customers. In his spare time, he likes reading about new technologies, traveling and exploring different cuisines.

As we continue to celebrate

As we continue to celebrate

To migrate your Hive Metastore to the Data Catalog, you can use the

To migrate your Hive Metastore to the Data Catalog, you can use the

Jianwei Li is Senior Analytics Specialist TAM. He provides consultant service for AWS enterprise support customers to design and build modern data platform. He has more than 10 years experience in big data and analytics domain. In his spare time, he like running and hiking.

Jianwei Li is Senior Analytics Specialist TAM. He provides consultant service for AWS enterprise support customers to design and build modern data platform. He has more than 10 years experience in big data and analytics domain. In his spare time, he like running and hiking. Narayanan Venkateswaran is an Engineer in the AWS EMR group. He works on developing Hive in EMR. He has over 17 years of work experience in the industry across several companies including Sun Microsystems, Microsoft, Amazon and Oracle. Narayanan also holds a PhD in databases with focus on horizontal scalability in relational stores.

Narayanan Venkateswaran is an Engineer in the AWS EMR group. He works on developing Hive in EMR. He has over 17 years of work experience in the industry across several companies including Sun Microsystems, Microsoft, Amazon and Oracle. Narayanan also holds a PhD in databases with focus on horizontal scalability in relational stores. Partha Sarathi is an Analytics Specialist TAM – at AWS based in Sydney, Australia. He brings 15+ years of technology expertise and helps Enterprise customers optimize Analytics workloads. He has extensively worked on both on-premise and cloud Bigdata workloads along with various ETL platform in his previous roles. He also actively works on conducting proactive operational reviews around the Analytics services like Amazon EMR, Redshift, and OpenSearch.

Partha Sarathi is an Analytics Specialist TAM – at AWS based in Sydney, Australia. He brings 15+ years of technology expertise and helps Enterprise customers optimize Analytics workloads. He has extensively worked on both on-premise and cloud Bigdata workloads along with various ETL platform in his previous roles. He also actively works on conducting proactive operational reviews around the Analytics services like Amazon EMR, Redshift, and OpenSearch. Krish is an Enterprise Support Manager responsible for leading a team of specialists in EMEA focused on BigData & Analytics, Databases, Networking and Security. He is also an expert in helping enterprise customers modernize their data platforms and inspire them to implement operational best practices. In his spare time, he enjoys spending time with his family, travelling, and video games.

Krish is an Enterprise Support Manager responsible for leading a team of specialists in EMEA focused on BigData & Analytics, Databases, Networking and Security. He is also an expert in helping enterprise customers modernize their data platforms and inspire them to implement operational best practices. In his spare time, he enjoys spending time with his family, travelling, and video games.

Jyothi Goudar is Partner Solutions Architect Manager at AWS. She works closely with global system integrator partner to enable and support customers moving their workloads to AWS.

Jyothi Goudar is Partner Solutions Architect Manager at AWS. She works closely with global system integrator partner to enable and support customers moving their workloads to AWS. Jay Rao is a Principal Solutions Architect at AWS. He enjoys providing technical and strategic guidance to customers and helping them design and implement solutions on AWS.

Jay Rao is a Principal Solutions Architect at AWS. He enjoys providing technical and strategic guidance to customers and helping them design and implement solutions on AWS.

João Moura is an AI/ML Specialist Solutions Architect at Amazon Web Services. He is mostly focused on NLP use cases and helping customers optimize deep learning model training and deployment. He is also an active proponent of ML-specialized hardware and low-code ML solutions.

João Moura is an AI/ML Specialist Solutions Architect at Amazon Web Services. He is mostly focused on NLP use cases and helping customers optimize deep learning model training and deployment. He is also an active proponent of ML-specialized hardware and low-code ML solutions. Weiqi Zhang is a Software Engineering Manager at Search M5, where he works on productizing large-scale models for Amazon machine learning applications. His interests include information retrieval and machine learning infrastructure.

Weiqi Zhang is a Software Engineering Manager at Search M5, where he works on productizing large-scale models for Amazon machine learning applications. His interests include information retrieval and machine learning infrastructure. Jason Carlson is a Software Engineer for developing machine learning pipelines to help reduce the number of stolen search impressions due to customer-perceived duplicates. He mostly works with Apache Spark, AWS, and PyTorch to help deploy and feed/process data for ML models. In his free time, he likes to read and go on runs.

Jason Carlson is a Software Engineer for developing machine learning pipelines to help reduce the number of stolen search impressions due to customer-perceived duplicates. He mostly works with Apache Spark, AWS, and PyTorch to help deploy and feed/process data for ML models. In his free time, he likes to read and go on runs. Shaohui Xi is an SDE at the Search Query Understanding Infra team. He leads the effort for building large-scale deep learning online inference services with low latency and high availability. Outside of work, he enjoys skiing and exploring good foods.

Shaohui Xi is an SDE at the Search Query Understanding Infra team. He leads the effort for building large-scale deep learning online inference services with low latency and high availability. Outside of work, he enjoys skiing and exploring good foods. Zhuoqi Zhang is a Software Development Engineer at the Search Query Understanding Infra team. He works on building model serving frameworks to improve latency and throughput for deep learning online inference services. Outside of work, he likes playing basketball, snowboarding, and driving.

Zhuoqi Zhang is a Software Development Engineer at the Search Query Understanding Infra team. He works on building model serving frameworks to improve latency and throughput for deep learning online inference services. Outside of work, he likes playing basketball, snowboarding, and driving. Haowei Sun is a software engineer in the Search Query Understanding Infra team. She works on designing APIs and infrastructure supporting deep learning online inference services. Her interests include service API design, infrastructure setup, and maintenance. Outside of work, she enjoys running, hiking, and traveling.

Haowei Sun is a software engineer in the Search Query Understanding Infra team. She works on designing APIs and infrastructure supporting deep learning online inference services. Her interests include service API design, infrastructure setup, and maintenance. Outside of work, she enjoys running, hiking, and traveling. Jaspreet Singh is an Applied Scientist on the M5 team, where he works on large-scale foundation models to improve the customer shopping experience. His research interests include multi-task learning, information retrieval, and representation learning.

Jaspreet Singh is an Applied Scientist on the M5 team, where he works on large-scale foundation models to improve the customer shopping experience. His research interests include multi-task learning, information retrieval, and representation learning. Shruti Koparkar is a Senior Product Marketing Manager at AWS. She helps customers explore, evaluate, and adopt EC2 accelerated computing infrastructure for their machine learning needs.

Shruti Koparkar is a Senior Product Marketing Manager at AWS. She helps customers explore, evaluate, and adopt EC2 accelerated computing infrastructure for their machine learning needs.

![: The Kubecost dashboard, which shows monthly savings of $1,058.56, monthly Kubernetes costs of $1,627.16, and a 3.9% cost efficiency. The dashboard shows how costs are allocated across various Kubernetes resources.]](https://d2908q01vomqb2.cloudfront.net/972a67c48192728a34979d9a35164c1295401b71/2022/09/21/couldops_1085_3.png)